1. About the Documentation

This section provides a brief overview of Reactor reference documentation. You can read this reference guide in a linear fashion, or you can skip sections if something doesn’t interest you.

1.1. Latest version & Copyright Notice

The Reactor reference guide is available as HTML documents. The latest copy is available at http://projectreactor.io/docs/core/release/reference/docs/index.html

Copies of this document may be made for your own use and for distribution to others, provided that you do not charge any fee for such copies and further provided that each copy contains this Copyright Notice, whether distributed in print or electronically.

1.2. Getting help

There are several ways to reach out for help with Reactor.

- Get in touch with the community on Gitter.
- Ask a question on stackoverflow.com under the project-reactor tag.
- Report bugs (or ask questions) in GitHub issues. The most closely monitored repositories are reactor-core and reactor-addons (which covers reactor-test and adapter issues).

| All of Reactor is open source, including this documentation! If you find problems with the docs or if you just want to improve them, please get involved. |

1.3. Where to go from here

- Head to Getting started if you feel like jumping straight into the code.
- If you’re new to Reactive Programming, though, you should probably start with the Introduction to Reactive Programming…
- To dig deeper into the core features of Reactor, head to Reactor Core Features:
  - If you’re looking for the right tool for the job but cannot think of a relevant operator, the Which operator do I need? section there might help.
  - Learn more about Reactor’s reactive types in the "Flux, an asynchronous sequence of 0-n items" and "Mono, an asynchronous 0-1 result" sections.
  - Switch threading contexts using a Scheduler.
  - Learn how to handle errors in the Handling Errors section.
- Unit testing? Yes, it is possible with the reactor-test project! See Testing.
- Programmatically creating a sequence is possible for more advanced creation of reactive sources.

2. Getting started

2.1. Introducing Reactor

Reactor is a fully non-blocking reactive programming foundation for the JVM, with efficient demand management (backpressure). It integrates directly with Java 8 functional APIs, notably CompletableFuture, Stream and Duration.

It offers composable asynchronous sequence APIs, Flux ([N] elements) and Mono ([0|1] elements), extensively implementing the Reactive Extensions specification.

Reactor also supports non-blocking IPC with the reactor-ipc components. Suited for Microservices Architecture, Reactor IPC offers backpressure-ready network engines for HTTP (including Websockets), TCP and UDP. Reactive Encoding/Decoding is fully supported.

2.2. The BOM

Since reactor-core 3.0.4, with the Aluminium release train, Reactor 3 has used a BOM[1] model.

This lets you group artifacts that are meant to work well together, without having to worry about the sometimes divergent versioning schemes of these artifacts.

The BOM is like a curated list of versions. It is itself versioned, using a release train scheme with a codename followed by a qualifier:

Aluminium-RELEASE, Carbon-BUILD-SNAPSHOT, Aluminium-SR1, Carbon-SR32[2]

The codenames represent what would traditionally be the MAJOR.MINOR number. They come (mostly) from the Periodic Table of Elements, in increasing alphabetical order.

The qualifiers are (in chronological order):

- BUILD-SNAPSHOT
- M1..N: Milestones or developer previews
- RELEASE: The first GA release in a codename series
- SR1..N: The subsequent GA releases in a codename series (equivalent to a PATCH number; SR stands for "Service Release").
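The ordering rules above can be made concrete with a small comparator. This is a hypothetical helper (ReleaseTrain is not part of Reactor or of any BOM tooling). It is a sketch that assumes every version has the form codename-qualifier and that qualifiers sort in the chronological order listed above. M and SR numbers are compared numerically, so SR2 comes before SR10.

```java
import java.util.Comparator;

// Hypothetical helper: orders release-train versions such as "Aluminium-SR1".
final class ReleaseTrain {
    // Rank of each qualifier kind, following the chronological order listed above.
    private static int qualifierRank(String qualifier) {
        if (qualifier.equals("BUILD-SNAPSHOT")) return 0;
        if (qualifier.startsWith("M")) return 1;
        if (qualifier.equals("RELEASE")) return 2;
        if (qualifier.startsWith("SR")) return 3;
        throw new IllegalArgumentException("Unknown qualifier: " + qualifier);
    }

    // Numeric suffix of M1..N / SR1..N qualifiers, 0 for the others.
    private static int qualifierNumber(String qualifier) {
        String digits = qualifier.replaceAll("\\D", "");
        return digits.isEmpty() ? 0 : Integer.parseInt(digits);
    }

    // The codename is everything before the first '-', the qualifier everything after it.
    static final Comparator<String> ORDER = Comparator
            .comparing((String v) -> v.substring(0, v.indexOf('-')))                  // codename, alphabetically
            .thenComparingInt(v -> qualifierRank(v.substring(v.indexOf('-') + 1)))    // qualifier kind
            .thenComparingInt(v -> qualifierNumber(v.substring(v.indexOf('-') + 1))); // M/SR number
}
```

Comparing codenames alphabetically only works because release trains are named in increasing alphabetical order, as described above.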

2.3. Getting Reactor

As mentioned above, the easiest way to use Reactor in your code is to use the BOM and add the relevant dependencies to your project. Note that when adding such a dependency, you omit the version so that it gets picked up from the BOM.

However, if you want to force the use of a specific artifact’s version, you can specify it when adding your dependency, as you usually would. You can also of course forgo the BOM entirely and always specify dependencies with their artifact versions.

2.3.1. Maven installation

The BOM concept is natively supported by Maven. First, you’ll need to import the BOM by adding the following to your pom.xml:[3]

<dependencyManagement> (1)
    <dependencies>
        <dependency>
            <groupId>io.projectreactor</groupId>
            <artifactId>reactor-bom</artifactId>
            <version>Aluminium-SR1</version>
            <type>pom</type>
            <scope>import</scope>
        </dependency>
    </dependencies>
</dependencyManagement>

| 1 | Notice the dependencyManagement tag: this is in addition to the regular dependencies section. |

Next, add your dependencies to the relevant Reactor projects as usual, except without a <version>:

<dependencies>
    <dependency>
        <groupId>io.projectreactor</groupId>
        <artifactId>reactor-core</artifactId> (1)
        (2)
    </dependency>
    <dependency>
        <groupId>io.projectreactor.addons</groupId>
        <artifactId>reactor-test</artifactId> (3)
        <scope>test</scope>
    </dependency>
</dependencies>

| 1 | dependency to the core library |
| 2 | no version tag here |
| 3 | reactor-test provides facilities to unit test reactive streams |

2.3.2. Gradle installation

Gradle has no core support for Maven BOMs, but you can use Spring’s gradle-dependency-management plugin.

First, apply the plugin from the Gradle Plugin Portal:

plugins {
    id "io.spring.dependency-management" version "1.0.1.RELEASE" (1)
}

| 1 | as of this writing, 1.0.1.RELEASE is the latest version of the plugin; check for updates. |

Then use it to import the BOM:

dependencyManagement {
    imports {
        mavenBom "io.projectreactor:reactor-bom:Aluminium-SR1"
    }
}

Finally, add a dependency to your project without a version number:

dependencies {
    compile 'io.projectreactor:reactor-core' (1)
}

| 1 | there is no third, colon-separated section for the version: it is taken from the BOM |

2.3.3. Milestones and Snapshots

Milestones and developer previews are distributed through the Spring Milestones repository rather than Maven Central. Add it to your build configuration file:

<repositories>
    <repository>
        <id>spring-milestones</id>
        <name>Spring Milestones Repository</name>
        <url>https://repo.spring.io/milestone</url>
    </repository>
</repositories>

repositories {
    maven { url 'https://repo.spring.io/milestone' }
    mavenCentral()
}

Similarly, snapshots are available in a separate, dedicated repository:

<repositories>
    <repository>
        <id>spring-snapshots</id>
        <name>Spring Snapshot Repository</name>
        <url>https://repo.spring.io/snapshot</url>
    </repository>
</repositories>

repositories {
    maven { url 'https://repo.spring.io/snapshot' }
    mavenCentral()
}

3. Introduction to Reactive Programming

Reactor is an implementation of the Reactive Programming paradigm, which can be summed up as:

Reactive programming is oriented around data flows and the propagation of change. This means that the underlying execution model will automatically propagate changes through the data flow.

In this particular instance, pioneered by the Reactive Extensions (Rx) library in the .NET ecosystem and also implemented by RxJava on the JVM, the reactive aspect is translated in object-oriented languages into a kind of extension of the Observer design pattern.

As time went on, a standardization emerged through the Reactive Streams effort, a specification that defines a set of interfaces and interaction rules for reactive libraries on the JVM. Its interfaces have been integrated into Java 9 (under the Flow class).

One can also compare the main reactive streams pattern with the familiar Iterator design pattern, as there is a duality to the Iterable-Iterator pair in all of these libraries. One major difference is that, while an Iterator is pull-based, reactive streams are push-based.

Using an iterator is quite imperative, even though the method of accessing values is solely the responsibility of the Iterable. Indeed, it is up to the developer to choose when to access the next() item in the sequence. In reactive streams, the equivalent of the above pair is Publisher-Subscriber. But it is the Publisher that notifies the Subscriber of newly available values as they come, and this push aspect is key to being reactive. Plus, operations applied to pushed values are expressed declaratively rather than imperatively.

In addition to pushing values, the error handling and completion aspects are also covered in a well-defined manner. A Publisher can push new values to its Subscriber (by calling onNext), but it can also signal an error (by calling onError and terminating the sequence) or completion (by calling onComplete and terminating the sequence).

onNext x 0..N [onError | onComplete]

This approach is very flexible, as the pattern applies equally well to use cases with at most one value, n values, or even an infinite sequence of values (for instance, the ticks of a clock).
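Because Java 9 ships these interfaces as java.util.concurrent.Flow, the onNext x 0..N [onError | onComplete] contract can be observed with the JDK alone. The following sketch uses the JDK’s SubmissionPublisher rather than Reactor. SignalRecorder and SignalDemo are hypothetical names. The subscriber requests Long.MAX_VALUE, so the publisher is free to push every item.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;
import java.util.concurrent.TimeUnit;

// Records every signal it receives, in order.
final class SignalRecorder<T> implements Flow.Subscriber<T> {
    final List<String> signals = new CopyOnWriteArrayList<>();
    final CountDownLatch terminated = new CountDownLatch(1);

    @Override
    public void onSubscribe(Flow.Subscription subscription) {
        subscription.request(Long.MAX_VALUE); // unbounded demand: let the publisher push everything
    }

    @Override
    public void onNext(T item) {
        signals.add("onNext(" + item + ")");
    }

    @Override
    public void onError(Throwable error) {
        signals.add("onError(" + error.getMessage() + ")");
        terminated.countDown();
    }

    @Override
    public void onComplete() {
        signals.add("onComplete");
        terminated.countDown();
    }
}

final class SignalDemo {
    // Pushes the given items, then completes; returns the signals seen by the subscriber.
    static List<String> pushThenComplete(List<String> items) {
        SignalRecorder<String> recorder = new SignalRecorder<>();
        try (SubmissionPublisher<String> publisher = new SubmissionPublisher<>()) {
            publisher.subscribe(recorder);
            items.forEach(publisher::submit); // each item becomes an onNext signal
        } // close() issues onComplete; the items submitted before it are delivered first
        try {
            recorder.terminated.await(5, TimeUnit.SECONDS); // delivery happens on another thread
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return recorder.signals;
    }
}
```

For example, pushThenComplete(List.of("a", "b")) records onNext(a), onNext(b), then onComplete. Calling closeExceptionally(Throwable) instead of close() would end the sequence with an onError signal instead.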

But let’s step back a bit and reflect on why we would need such an asynchronous reactive library in the first place.

3.1. Blocking can be wasteful

Modern applications can reach huge numbers of concurrent users, and, even though the capabilities of modern hardware have continued to improve, the performance of modern software is still a key concern.

There are broadly two ways one can improve a program’s performance:

- parallelize: use more threads and more hardware resources, and/or
- seek more efficiency in how current resources are used.

Usually, Java developers write programs using blocking code. This is fine until there is a performance bottleneck, at which point the time comes to introduce additional threads running similar blocking code. But this scaling in resource utilization can quickly introduce contention and concurrency problems.

Worse! If you look closely, as soon as a program involves some latency (notably I/O, like a database request or a network call), there is a waste of resources in the sense that the thread now sits idle, waiting for some data.

So the parallelization approach is not a silver bullet: although it is necessary in order to access the full power of the hardware, it is also complex to reason about and susceptible to wasting resources…

3.2. Asynchronicity to the rescue?

The second approach described above, seeking more efficiency, can be a solution to that last problem. By writing asynchronous non-blocking code, you allow for the execution to switch to another active task using the same underlying resources, and to later come back to the current "train of thought" when the asynchronous processing has completed.

But how can you produce asynchronous code on the JVM?

Java offers mainly two models of asynchronous programming:

- Callbacks: asynchronous methods don’t have a return value but take an extra callback parameter (a lambda or simple anonymous class) that gets called when the result is available. A well-known example is Swing’s EventListener hierarchy.
- Futures: asynchronous methods return a Future<T> immediately. The asynchronous process computes a T value, but the Future wraps access to it. The value isn’t available immediately, and the Future can be polled until it is. For instance, an ExecutorService running Callable<T> tasks uses Futures.
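Both models can be sketched with plain JDK types. In the hypothetical AsyncStyles class below, lengthWithCallback follows the callback model: it returns nothing and hands its result to a Consumer. lengthAsFuture follows the Future model by submitting a Callable to an ExecutorService. pollUntilDone illustrates the polling the text mentions. Computing a string length stands in for real asynchronous work.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Future;
import java.util.function.Consumer;

final class AsyncStyles {
    // Callback style: no return value; the result is handed to the extra callback parameter.
    static void lengthWithCallback(ExecutorService pool, String input, Consumer<Integer> callback) {
        pool.execute(() -> callback.accept(input.length()));
    }

    // Future style: returns immediately with a Future that is not valued yet.
    static Future<Integer> lengthAsFuture(ExecutorService pool, String input) {
        Callable<Integer> task = input::length;
        return pool.submit(task);
    }

    // Polls the Future until it is valued, then reads the value.
    static int pollUntilDone(Future<Integer> future) {
        while (!future.isDone()) {
            Thread.yield(); // busy polling, for illustration only
        }
        try {
            return future.get(); // does not block here: the future is already done
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e.getCause());
        }
    }
}
```

Neither style composes well. Chaining a second asynchronous step means nesting another callback or polling another Future, which is the limitation discussed next.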

So is it good enough? Well, not for every use case, and both approaches have limitations…

Callbacks are very hard to compose together, quickly leading to code that is difficult to read and maintain ("Callback Hell").

Let’s take an example: showing the top 5 favorites of a user on the UI, or suggestions if the user has no favorites. This goes through 3 services (one gives favorite IDs, the second fetches favorite details, and the third offers suggestions with details):

userService.getFavorites(userId, new Callback<List<String>>() { (1)
    public void onSuccess(List<String> list) { (2)
        if (list.isEmpty()) { (3)
            suggestionService.getSuggestions(new Callback<List<Favorite>>() {
                public void onSuccess(List<Favorite> list) { (4)
                    UiUtils.submitOnUiThread(() -> { (5)
                        list.stream()
                            .limit(5)
                            .forEach(uiList::show); (6)
                    });
                }

                public void onError(Throwable error) { (7)
                    UiUtils.errorPopup(error);
                }
            });
        } else {
            list.stream() (8)
                .limit(5)
                .forEach(favId -> favoriteService.getDetails(favId, (9)
                    new Callback<Favorite>() {
                        public void onSuccess(Favorite details) {
                            UiUtils.submitOnUiThread(() -> uiList.show(details));
                        }

                        public void onError(Throwable error) {
                            UiUtils.errorPopup(error);
                        }
                    }
                ));
        }
    }

    public void onError(Throwable error) {
        UiUtils.errorPopup(error);
    }
});

| 1 | We have callback-based services: a Callback interface with a method invoked when the async process was successful and one invoked in case of an error. |
| 2 | The first service invokes its callback with the list of favorite IDs. |
| 3 | If the list is empty, we must go to suggestionService… |
| 4 | …and it gives a List<Favorite> to a second callback. |
| 5 | Since we’re dealing with UI, we need to ensure our consuming code runs in the UI thread. |
| 6 | We use a Java 8 Stream to limit the number of suggestions processed to 5, and we show them in a graphical list in the UI. |
| 7 | At each level, we deal with errors the same way: we show them in a popup. |
| 8 | Back to the favorite ID level: if the service returned a full list, then we need to go to the favoriteService to get detailed Favorite objects. Since we only want 5 of them, we first stream the list of IDs to limit it to 5. |
| 9 | Once again, a callback. This time we get a fully-fledged Favorite object that we push to the UI inside the UI thread. |

That’s a lot of code, a bit hard to follow and with repetitive parts. Compare it with its equivalent in Reactor:

userService.getFavorites(userId) (1)
    .flatMap(favoriteService::getDetails) (2)
    .switchIfEmpty(suggestionService.getSuggestions()) (3)
    .take(5) (4)
    .publishOn(UiUtils.uiThreadScheduler()) (5)
    .subscribe(uiList::show, UiUtils::errorPopup); (6)

| 1 | We start with a flow of favorite IDs. |
| 2 | We asynchronously transform these into detailed Favorite objects (flatMap). We now have a flow of Favorite. |
| 3 | In case the flow of Favorite is empty, we switch to a fallback through the suggestionService. |
| 4 | We are only interested in at most 5 elements from the resulting flow. |
| 5 | At the end, we want to process each piece of data in the UI thread. |
| 6 | We trigger the flow by describing what to do with the final form of the data (show it in a UI list) and what to do in case of an error (show a popup). |

What if you wanted to ensure the favorite IDs are retrieved in less than 800ms and, if it takes longer, get them from a cache? In the callback-based code, that is a complicated task. But in Reactor it becomes as easy as adding a timeout operator to the chain:

userService.getFavorites(userId)
    .timeout(Duration.ofMillis(800)) (1)
    .onErrorResume(cacheService.cachedFavoritesFor(userId)) (2)
    .flatMap(favoriteService::getDetails) (3)
    .switchIfEmpty(suggestionService.getSuggestions())
    .take(5)
    .publishOn(UiUtils.uiThreadScheduler())
    .subscribe(uiList::show, UiUtils::errorPopup);

| 1 | If the part above emits nothing for more than 800ms, propagate an error… |
| 2 | …and in case of any error from above, fall back to the cacheService. |
| 3 | The rest of the chain is similar to the original example. |

Futures are a bit better, but they are still not good at composition, despite the improvements brought in Java 8 by CompletableFuture. Orchestrating multiple futures together is doable, but not easy. Plus, it is very (too?) easy to stay in familiar territory and block on a Future by calling its get() method. And lastly, futures lack support for multiple values and advanced error handling.

Let’s take another example: we get a list of IDs from which we want to fetch a name and a statistic, and combine these pair-wise, all of it asynchronously.

CompletableFuture combination

CompletableFuture<List<String>> ids = ifhIds(); (1)

CompletableFuture<List<String>> result = ids.thenComposeAsync(l -> { (2)
    Stream<CompletableFuture<String>> zip =
        l.stream().map(i -> { (3)
            CompletableFuture<String> nameTask = ifhName(i); (4)
            CompletableFuture<Integer> statTask = ifhStat(i); (5)

            return nameTask.thenCombineAsync(statTask, (name, stat) -> "Name " + name + " has stats " + stat); (6)
        });
    List<CompletableFuture<String>> combinationList = zip.collect(Collectors.toList()); (7)
    CompletableFuture<String>[] combinationArray = combinationList.toArray(new CompletableFuture[combinationList.size()]);

    CompletableFuture<Void> allDone = CompletableFuture.allOf(combinationArray); (8)
    return allDone.thenApply(v -> combinationList.stream()
        .map(CompletableFuture::join) (9)
        .collect(Collectors.toList()));
});

List<String> results = result.join(); (10)
assertThat(results).contains(
    "Name NameJoe has stats 103",
    "Name NameBart has stats 104",
    "Name NameHenry has stats 105",
    "Name NameNicole has stats 106",
    "Name NameABSLAJNFOAJNFOANFANSF has stats 121");

| 1 | We start off with a future that gives us a list of IDs to process. |
| 2 | We want to start some deeper asynchronous processing once we get the list. |
| 3 | For each element in the list… |
| 4 | First we asynchronously get the associated name… |
| 5 | Then we asynchronously get the associated stat… |
| 6 | And we combine both results. |
| 7 | We now have a list of futures that represent all the combination tasks. To wait for all of them, we need to convert the list to an array… |
| 8 | …and pass it to CompletableFuture.allOf, which outputs a future that completes when all tasks have completed. |
| 9 | The tricky bit is that allOf returns CompletableFuture<Void>, so we iterate over the list of futures again, collecting their results via join() (which here doesn’t block, since allOf has ensured the futures are all done). |
| 10 | Once the whole asynchronous pipeline has been triggered, we wait for it to be processed and return the list of results that we can assert. |
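Running the pipeline above end to end requires implementations of the three services. The following sketch uses hypothetical stubs for ifhIds, ifhName and ifhStat that complete asynchronously with made-up data. The statistic is simply the ID’s length, so the output differs from the assertion above. The sketch also uses thenCompose and thenCombine rather than their Async variants; this is a simplification, not a requirement.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.stream.Collectors;

final class CombinationDemo {
    // Hypothetical stand-ins for the services used in the example above.
    static CompletableFuture<List<String>> ifhIds() {
        return CompletableFuture.supplyAsync(() -> List.of("Joe", "Bart"));
    }

    static CompletableFuture<String> ifhName(String id) {
        return CompletableFuture.supplyAsync(() -> "Name" + id);
    }

    static CompletableFuture<Integer> ifhStat(String id) {
        return CompletableFuture.supplyAsync(id::length); // made-up statistic
    }

    static List<String> combine() {
        CompletableFuture<List<String>> result = ifhIds().thenCompose(ids -> {
            // One combined future per ID: name and stat are fetched concurrently.
            List<CompletableFuture<String>> combinations = ids.stream()
                    .map(id -> ifhName(id).thenCombine(ifhStat(id),
                            (name, stat) -> "Name " + name + " has stats " + stat))
                    .collect(Collectors.toList());
            CompletableFuture<Void> allDone =
                    CompletableFuture.allOf(combinations.toArray(new CompletableFuture<?>[0]));
            return allDone.thenApply(v -> combinations.stream()
                    .map(CompletableFuture::join) // does not block: allOf guarantees completion
                    .collect(Collectors.toList()));
        });
        return result.join(); // block only at the very end, as a test would
    }
}
```

With these stubs, combine() returns "Name NameJoe has stats 3" and "Name NameBart has stats 4", in the order of the IDs.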

Since Reactor has more combination operators out of the box, this can be simplified:

Flux<String> ids = ifhrIds(); (1)

Flux<String> combinations =
    ids.flatMap(id -> { (2)
        Mono<String> nameTask = ifhrName(id); (3)
        Mono<Integer> statTask = ifhrStat(id); (4)

        return nameTask.and(statTask, (5)
            (name, stat) -> "Name " + name + " has stats " + stat);
    });

Mono<List<String>> result = combinations.collectList(); (6)

List<String> results = result.block(); (7)
assertThat(results).containsExactly( (8)
    "Name NameJoe has stats 103",
    "Name NameBart has stats 104",
    "Name NameHenry has stats 105",
    "Name NameNicole has stats 106",
    "Name NameABSLAJNFOAJNFOANFANSF has stats 121"
);

| 1 | This time we start from an asynchronously provided sequence of IDs (a Flux<String>). |
| 2 | For each element in the sequence, we asynchronously process it (flatMap) twice: |
| 3 | First we get the associated name. |
| 4 | Second we get the associated stat. |
| 5 | We are actually interested in asynchronously combining these 2 values. |
| 6 | Furthermore, we’d like to aggregate the values into a List as they become available. |
| 7 | In production, we’d continue working with the Flux asynchronously by further combining it or subscribing to it. Since we’re in a test, we block waiting for the processing to finish instead, directly returning the aggregated list of values. |
| 8 | We are now ready to assert the result. |

These drawbacks of callbacks and futures seem familiar: aren’t they exactly what Reactive Programming tries to address with the Publisher-Subscriber pair?

3.3. From Imperative to Reactive Programming

Indeed, reactive libraries like Reactor aim to address these drawbacks of "classic" asynchronous approaches on the JVM, while also focusing on a few additional aspects. To sum it up:

- Composability and readability
- Data as a flow manipulated with a rich vocabulary of operators
- Nothing happens until you subscribe
- Backpressure, or the ability for the consumer to signal the producer that the rate of emission is too high for it to keep up
- High-level but high-value abstraction that is concurrency-agnostic

3.4. Composability and readability

By composability, we mean the ability to orchestrate multiple asynchronous tasks together, using results from previous tasks to feed input to subsequent ones, or executing several tasks in a fork-join style, as well as reusing asynchronous tasks as discrete components in a higher-level system.

This is tightly coupled to readability and maintainability of one’s code, as these layers of asynchronous processes get more and more complex. As we saw, the callback model is simple, but one of its main drawbacks is that for complex processes you need to have a callback executed from a callback, itself nested inside another callback, and so on…

That is what is referred to as Callback Hell. And as you can guess (or know from experience), such code is pretty hard to go back to and reason about.

Reactor on the other hand offers rich composition options where code mirrors the organization of the abstract process, and everything is kept at the same level (no nesting if it is not necessary).

3.5. The assembly line analogy

You can think of data processed by a reactive application as moving through an assembly line. Reactor is both the conveyor belt and the workstations. The raw material pours from a source (the original Publisher) and ends up as a finished product ready to be pushed to the consumer (or Subscriber).

The raw material can go through various transformations and other intermediary steps, or be part of a larger assembly line that aggregates intermediate pieces together.

Finally, if there is a glitch or clogging at one point (for example, boxing the products takes a disproportionately long time), the affected workstation can signal that upstream and limit the flow of raw material.

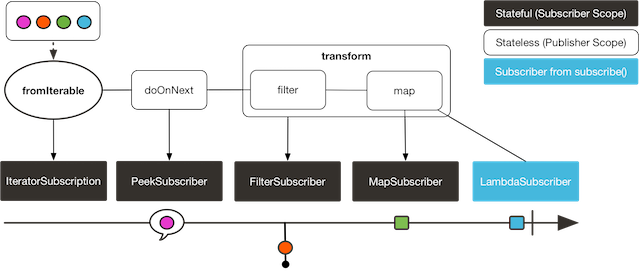

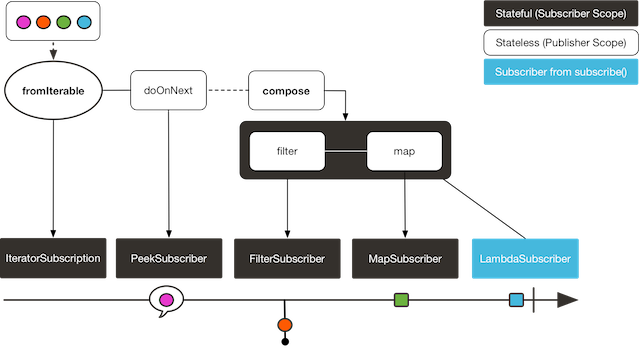

3.6. Operators

In Reactor, operators are what we represented in the above analogy as the assembly line’s workstations. Each operator adds behavior to a Publisher, and it actually wraps the previous step’s Publisher into a new instance. The whole chain is thus layered, like an onion, where data originates from the first Publisher in the center and moves outward, transformed by each layer.

| Understanding this can help you avoid a common mistake that would lead you to believe that an operator you used in your chain is not being applied. See this item in the FAQ. |

While the Reactive Streams specification doesn’t specify operators at all, one of the main added values of derived reactive libraries like Reactor is the rich vocabulary of operators that they bring along. These cover a lot of ground, from simple transformation and filtering to complex orchestration and error handling.

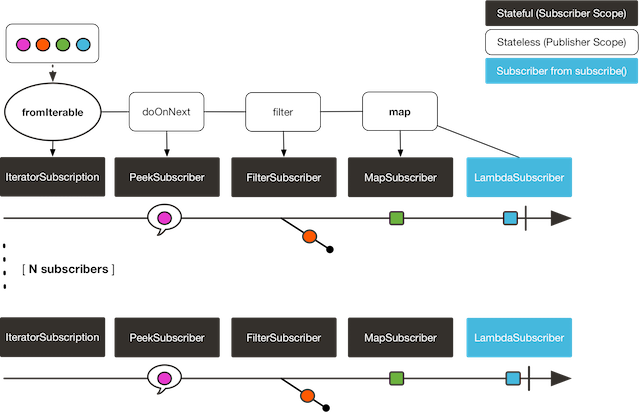

3.7. Nothing happens until you subscribe()

In Reactor, when you write a Publisher chain, data doesn’t start pumping into it by default. Instead, what you have is an abstract description of your asynchronous process (which, by the way, can help with reusability and composition).

By the act of subscribing, you tie the Publisher to a Subscriber, which triggers the flow of data in the whole chain. This is achieved internally by a single request signal from the Subscriber that is propagated upstream, right back to the source Publisher.
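The JDK’s java.util.stream offers a partial analogy for this "describe first, run later" behavior: intermediate operations such as map do nothing until a terminal operation runs. The hypothetical LazyPipeline below records when its map step actually executes. The analogy stops there, though. A Stream is pull-based and can be consumed only once, whereas a Publisher pushes data and can be subscribed to many times.

```java
import java.util.List;
import java.util.stream.Stream;

final class LazyPipeline {
    // Assembles a pipeline; nothing is processed when this method returns.
    static Stream<Integer> assemble(List<String> log) {
        return Stream.of(1, 2, 3)
                .map(i -> {
                    log.add("map " + i); // side effect only to observe when processing happens
                    return i * 10;
                });
    }
}
```

Right after assemble(log) returns, log is still empty. Only a terminal operation such as collect(Collectors.toList()) makes the map step run for 1, 2 and 3, which is the role subscribe() plays in Reactor.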

3.8. Backpressure

The same mechanism is in fact used to implement backpressure, which we described in the assembly line analogy as a feedback signal sent up the line when a workstation processes more slowly than the upstream.

The real mechanism defined by the Reactive Streams specification is pretty close to the analogy: a subscriber can work in unbounded mode and let the source push all the data at its fastest achievable rate, or it can use the request mechanism to signal the source that it is ready to process at most n elements.

Intermediate operators can also change the request in-flight. Imagine a buffer operator that groups elements in batches of 10. If the subscriber requests 1 buffer, then it is acceptable for the source to produce 10 elements. Prefetching strategies can also be applied if producing the elements before they are requested is not too costly.

This transforms the push model into a push-pull hybrid, where the downstream can pull n elements from upstream if they are readily available, but if they are not, they get pushed by the upstream whenever they are produced.
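The request mechanism can be sketched with the JDK’s Flow interfaces. DemandAwareRange and DemandRecorder are hypothetical classes, not Reactor types. The publisher emits only as many items as have been requested. It caps accumulated demand at Long.MAX_VALUE, which means "unbounded", and it completes as soon as the range is exhausted, because completion needs no demand. The sketch is single-threaded on purpose and is not a spec-compliant implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Flow;

// Hypothetical synchronous publisher of [start, start + count) that respects demand.
final class DemandAwareRange implements Flow.Publisher<Integer> {
    private final int start;
    private final int end;

    DemandAwareRange(int start, int count) {
        this.start = start;
        this.end = start + count;
    }

    @Override
    public void subscribe(Flow.Subscriber<? super Integer> subscriber) {
        subscriber.onSubscribe(new Flow.Subscription() {
            private int next = start;   // next value to emit
            private long demand;        // requested but not yet delivered
            private boolean emitting;   // true while the emission loop runs
            private boolean done;       // completed, failed or cancelled

            @Override
            public void request(long n) {
                if (done) return;
                if (n <= 0) {           // non-positive requests are treated as an error
                    done = true;
                    subscriber.onError(new IllegalArgumentException("non-positive request: " + n));
                    return;
                }
                demand = (Long.MAX_VALUE - demand < n) ? Long.MAX_VALUE : demand + n;
                if (emitting) return;   // a re-entrant request only adds demand to the running loop
                emitting = true;
                while (demand > 0 && next < end && !done) {
                    demand--;
                    subscriber.onNext(next++);
                }
                if (!done && next == end) { // completion needs no demand
                    done = true;
                    subscriber.onComplete();
                }
                emitting = false;
            }

            @Override
            public void cancel() {
                done = true;
            }
        });
    }
}

// Subscriber that requests a fixed amount up front and records what it receives.
final class DemandRecorder implements Flow.Subscriber<Integer> {
    final List<String> signals = new ArrayList<>();
    Flow.Subscription subscription;
    private final long initialRequest;

    DemandRecorder(long initialRequest) {
        this.initialRequest = initialRequest;
    }

    @Override public void onSubscribe(Flow.Subscription s) { subscription = s; s.request(initialRequest); }
    @Override public void onNext(Integer item) { signals.add("onNext:" + item); }
    @Override public void onError(Throwable t) { signals.add("onError:" + t.getMessage()); }
    @Override public void onComplete() { signals.add("onComplete"); }
}
```

With new DemandAwareRange(1, 3) and an initial request of 2, only 1 and 2 arrive. A later subscription.request(5) delivers 3 and then onComplete, even though 4 units of demand remain unused.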

3.9. Hot vs Cold

In the Rx family of reactive libraries, one can distinguish two broad categories of reactive sequences: hot and cold. This distinction mainly has to do with how the reactive stream reacts to subscribers:

- A Cold sequence starts anew for each Subscriber, including at the source of data. If the source wraps an HTTP call, a new HTTP request is made for each subscription.
- A Hot sequence does not start from scratch for each Subscriber. Rather, late subscribers receive the signals emitted after they subscribed. Note, however, that some hot reactive streams can cache or replay the history of emissions totally or partially… From a general perspective, a hot sequence emits whether or not there are subscribers listening.

For more information on hot vs cold in the context of Reactor, see this reactor-specific section.
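The difference can be sketched without any reactive library. ColdSource and HotSource are hypothetical, callback-based simplifications that have no Subscription or backpressure. The cold source re-runs its data source (a Supplier standing in for something like an HTTP call) for each subscriber. The hot source emits whether or not anyone listens, so a late subscriber only sees later values.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Supplier;

// Hypothetical cold source: every subscription re-runs the data source from scratch.
final class ColdSource<T> {
    private final Supplier<List<T>> fetch; // stands in for, e.g., an HTTP call

    ColdSource(Supplier<List<T>> fetch) {
        this.fetch = fetch;
    }

    void subscribe(Consumer<? super T> subscriber) {
        fetch.get().forEach(subscriber); // a fresh "request" per subscriber
    }
}

// Hypothetical hot source: emits regardless of subscribers; late subscribers miss earlier values.
final class HotSource<T> {
    private final List<Consumer<? super T>> subscribers = new ArrayList<>();

    void subscribe(Consumer<? super T> subscriber) {
        subscribers.add(subscriber);
    }

    void emit(T value) {
        for (Consumer<? super T> s : subscribers) {
            s.accept(value); // only current subscribers receive this value
        }
    }
}
```

Subscribing twice to a ColdSource calls its Supplier twice, and both subscribers get the full data. With a HotSource, a subscriber added between emit(1) and emit(2) receives only 2.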

4. Reactor Core Features

reactor-core is the main artifact of the project, a reactive library that focuses on the Reactive Streams specification and targets Java 8.

Reactor introduces composable reactive types that implement Publisher but also provide a rich vocabulary of operators: Flux and Mono. The former represents a reactive sequence of 0..N items, while the latter represents a single-valued-or-empty result.

This distinction carries a bit of semantic information into the type, indicating the rough cardinality of the asynchronous processing. For instance, an HTTP request produces only one response, so there would not be much sense in doing a count operation. Expressing the result of such an HTTP call as a Mono<HttpResponse> thus makes more sense than as a Flux<HttpResponse>, as it offers only operators that are relevant to a "zero or one item" context.

In parallel, operators that change the maximum cardinality of the processing also switch to the relevant type. For instance, the count operator exists in Flux, but it returns a Mono<Long>.

4.1. Flux, an asynchronous sequence of 0-n items

A Flux<T> is a standard Publisher<T> representing an asynchronous sequence of 0 to N emitted items, optionally terminated by either a success signal or an error.

As in the Reactive Streams spec, these 3 types of signal translate to calls to a downstream Subscriber’s onNext, onComplete or onError methods.

With this large scope of possible signals, Flux is the general-purpose reactive type. Note that all events, even terminating ones, are optional: no onNext event but an onComplete event represents an empty finite sequence, while removing the onComplete as well gives an infinite empty sequence. Similarly, infinite sequences are not necessarily empty: for example, Flux.interval(Duration) produces a Flux<Long> that is infinite and emits regular ticks from a clock.

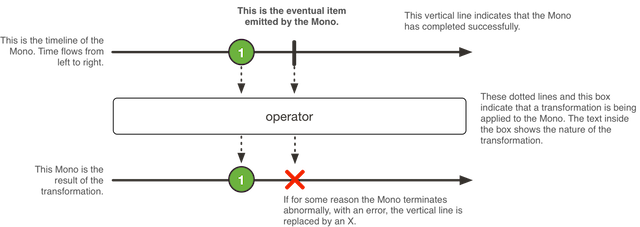

4.2. Mono, an asynchronous 0-1 result

A Mono<T> is a specialized Publisher<T> that emits at most one item and then optionally terminates with an onComplete signal or an onError signal.

As such, it offers only a relevant subset of operators. For instance, combination operators can either ignore the right-hand-side emissions and return another Mono, or emit values from both sides, in which case they switch to a Flux.

Note that a Mono can be used to represent no-value asynchronous processes that only have the concept of completion (think Runnable): just use an empty Mono<Void>.

4.3. Simple ways to create a Flux/Mono and to subscribe to it

The easiest way to get started with Flux and Mono is to use one of the numerous factory methods found in their respective classes.

For instance, to create a simple sequence of String, you can either enumerate them or put them in a collection and create the Flux from it:

Flux<String> seq1 = Flux.just("foo", "bar", "foobar");

List<String> iterable = Arrays.asList("foo", "bar", "foobar");
Flux<String> seq2 = Flux.fromIterable(iterable);

Other examples of factory methods include:

Mono<String> noData = Mono.empty(); (1)

Mono<String> data = Mono.just("foo");

Flux<Integer> numbersFromFiveToSeven = Flux.range(5, 3); (2)

| 1 | notice the factory method honors the generic type even though there will be no value |
| 2 | the subtlety is that the first parameter is the start of the range, while the second parameter is the number of items to produce. |

When it comes to subscribing, Flux and Mono make use of Java 8 lambdas. You have a wide choice of .subscribe() variants that take lambdas for different combinations of callbacks:

Flux

subscribe(); (1)

subscribe(Consumer<? super T> consumer); (2)

subscribe(Consumer<? super T> consumer,
          Consumer<? super Throwable> errorConsumer); (3)

subscribe(Consumer<? super T> consumer,
          Consumer<? super Throwable> errorConsumer,
          Runnable completeConsumer); (4)

subscribe(Consumer<? super T> consumer,
          Consumer<? super Throwable> errorConsumer,
          Runnable completeConsumer,
          Consumer<? super Subscription> subscriptionConsumer); (5)

| 1 | Just subscribe and trigger the sequence. |
| 2 | Do something with each produced value. |
| 3 | Deal with values but also react to an error. |
| 4 | Deal with values and errors but also execute some code when the sequence successfully completes. |
| 5 | Deal with values, errors and successful completion but also do something with the Subscription produced by this subscribe call. |

These variants return a reference to the subscription that you can use to cancel the subscription when no more data is needed. Upon cancellation, the source should stop producing values and clean up any resources it created. This cancel and clean-up behavior is represented in Reactor by the general-purpose Disposable interface.
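The relationship between the lambda-based variants and cancellation can be sketched with JDK types. LambdaSubscriber is a hypothetical adapter, not Reactor’s implementation. It turns a value consumer, an error consumer and a completion Runnable into a Flow.Subscriber and exposes a dispose() method in the spirit of Disposable. ManualPublisher is an equally hypothetical, hand-driven source used only to exercise the adapter, and for brevity it ignores the requested amount.

```java
import java.util.concurrent.Flow;
import java.util.function.Consumer;

// Hypothetical adapter loosely mirroring subscribe(consumer, errorConsumer, completeConsumer).
final class LambdaSubscriber<T> implements Flow.Subscriber<T> {
    private final Consumer<? super T> consumer;
    private final Consumer<? super Throwable> errorConsumer;
    private final Runnable completeConsumer;
    private volatile Flow.Subscription subscription;

    LambdaSubscriber(Consumer<? super T> consumer,
                     Consumer<? super Throwable> errorConsumer,
                     Runnable completeConsumer) {
        this.consumer = consumer;
        this.errorConsumer = errorConsumer;
        this.completeConsumer = completeConsumer;
    }

    @Override
    public void onSubscribe(Flow.Subscription s) {
        subscription = s;
        s.request(Long.MAX_VALUE); // this sketch simply requests everything up front
    }

    @Override public void onNext(T item) { consumer.accept(item); }
    @Override public void onError(Throwable error) { errorConsumer.accept(error); }
    @Override public void onComplete() { completeConsumer.run(); }

    // Cancels the subscription, in the spirit of Reactor's Disposable.dispose().
    void dispose() {
        Flow.Subscription s = subscription;
        if (s != null) {
            s.cancel();
        }
    }
}

// Hypothetical hand-driven publisher; it assumes an unbounded request and skips demand tracking.
final class ManualPublisher<T> implements Flow.Publisher<T> {
    private Flow.Subscriber<? super T> subscriber;
    private boolean cancelled;

    @Override
    public void subscribe(Flow.Subscriber<? super T> s) {
        subscriber = s;
        s.onSubscribe(new Flow.Subscription() {
            @Override public void request(long n) { /* demand tracking omitted */ }
            @Override public void cancel() { cancelled = true; } // the source stops producing
        });
    }

    void emit(T item) { if (!cancelled) subscriber.onNext(item); }
    void complete() { if (!cancelled) subscriber.onComplete(); }
}
```

After emit(1), emit(2) and dispose(), a further emit(3) or complete() reaches neither the value consumer nor the completion callback, because the source has seen the cancellation.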

| These variants are conveniences over the subscribe method defined by Reactive Streams: subscribe(Subscriber<? super T> subscriber); |

That last variant is useful if you already have a Subscriber handy, but more often you’ll need it because you want to do something subscription-related in the other callbacks. Most probably, that would be dealing with backpressure and triggering the requests yourself.

In that case, you can ease things up by using the BaseSubscriber abstract class, which offers convenience methods for that:

BaseSubscriber to fine tune backpressure

Flux<String> source = someStringSource();

source.map(String::toUpperCase)
      .subscribe(new BaseSubscriber<String>() { (1)
          @Override
          protected void hookOnSubscribe(Subscription subscription) {
              (2)
              request(1); (3)
          }

          @Override
          protected void hookOnNext(String value) {
              request(1); (4)
          }

          (5)
      });

| 1 | The BaseSubscriber is an abstract class, so we create an anonymous implementation and specify the generic type. |
| 2 | BaseSubscriber defines hooks for the various signal handling you can implement in a Subscriber. It also deals with the boilerplate of capturing the Subscription object so you can manipulate it in other hooks. |
| 3 | request(n) is such a method: it propagates a backpressure request to the captured subscription from any of the hooks. Here we start the stream by requesting 1 element from the source. |
| 4 | Upon receiving a new value, we continue requesting new items from the source one by one. |
| 5 | Other hooks are hookOnComplete, hookOnError, hookOnCancel and hookFinally (which is always called when the sequence terminates, with the type of termination passed in as a SignalType parameter). |

When manipulating requests like that, you must be careful to produce enough demand for the sequence to advance, or your Flux will get "stuck". That is why BaseSubscriber defaults to an unbounded request in hookOnSubscribe. When you override that hook (and hookOnNext) as above, you should usually call request at least once.

BaseSubscriber also offers a requestUnbounded() method to switch to unbounded mode (equivalent to request(Long.MAX_VALUE)).
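The following sketch puts those hooks together in runnable form. It assumes reactor-core is on the classpath. Flux.range(1, 5) stands in for someStringSource(), and the subscriber requests in batches of two rather than one. Every request and signal is recorded so you can see how they interleave. The batch size and the BatchRequestExample class are illustrative choices, not part of Reactor.

```java
import java.util.ArrayList;
import java.util.List;

import org.reactivestreams.Subscription;
import reactor.core.publisher.BaseSubscriber;
import reactor.core.publisher.Flux;

class BatchRequestExample {

    static List<String> run() {
        List<String> events = new ArrayList<>();
        Flux.range(1, 5).subscribe(new BaseSubscriber<Integer>() {
            private int receivedInBatch = 0; // items received since the last request

            @Override
            protected void hookOnSubscribe(Subscription subscription) {
                events.add("request 2");
                request(2); // initial demand: without it, nothing is ever emitted
            }

            @Override
            protected void hookOnNext(Integer value) {
                events.add("next " + value);
                if (++receivedInBatch == 2) { // batch exhausted: ask for the next one
                    receivedInBatch = 0;
                    events.add("request 2");
                    request(2);
                }
            }

            @Override
            protected void hookOnComplete() {
                events.add("complete");
            }
        });
        return events; // Flux.range is synchronous, so everything is recorded by now
    }

    public static void main(String[] args) {
        run().forEach(System.out::println);
    }
}
```

Because demand is only renewed once a full batch has arrived, the source never gets ahead of the subscriber by more than two items. The completion signal, which needs no demand, still arrives after the fifth value.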

4.4. Programmatically creating a sequence

In this section, we introduce the means of creating a Flux (or Mono) by programmatically defining its associated events (onNext, onError, onComplete). All these methods share the fact that they expose an API to trigger the events, which we call a sink. There are actually a few sink variants, as you will discover below.

4.4.1. Generate

The simplest form of programmatic creation of a Flux is through the generate method, which takes a generator function.

This is for synchronous and one-by-one emissions, meaning that the sink is a SynchronousSink and that its next() method can be called at most once per callback invocation. You can then additionally call error(Throwable) or complete().

The most useful variant is probably the one that also lets you keep a state that you can refer to in your sink usage to decide what to emit next. The generator function then becomes a BiFunction<S, SynchronousSink<T>, S>, with <S> the type of the state object. You have to provide a Supplier<S> for the initial state, and your generator function now returns a new state on each round.

For instance, you could simply use an int as the state:

generate

    Flux<String> flux = Flux.generate(
        () -> 0, (1)
        (state, sink) -> {
            sink.next("3 x " + state + " = " + 3*state); (2)
            if (state == 10) sink.complete(); (3)
            return state + 1; (4)
        });

| 1 | we supply the initial state value of 0 |
| 2 | we use the state to choose what to emit (a row in the multiplication table of 3) |
| 3 | we also use it to choose when to stop (multiplication tables traditionally stop at times ten) |
| 4 | we return a new state that will be used in the next invocation (unless the sequence terminated in this one) |

The code above generates the table of 3, as the following sequence:

    3 x 0 = 0
    3 x 1 = 3
    3 x 2 = 6
    3 x 3 = 9
    3 x 4 = 12
    3 x 5 = 15
    3 x 6 = 18
    3 x 7 = 21
    3 x 8 = 24
    3 x 9 = 27
    3 x 10 = 30

You can also use a mutable <S>. The example above could, for instance, be rewritten using a single AtomicLong as the state, mutating it on each round:

    Flux<String> flux = Flux.generate(
        AtomicLong::new, (1)
        (state, sink) -> {
            long i = state.getAndIncrement(); (2)
            sink.next("3 x " + i + " = " + 3*i);
            if (i == 10) sink.complete();
            return state; (3)
        });

| 1 | this time, we generate a mutable object as the state |
| 2 | we mutate the state here |
| 3 | we return the same instance as the new state |

If your state object needs to clean up some resources, use the generate(Supplier<S>, BiFunction, Consumer<S>) variant to clean up the last state instance.
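Here is a minimal sketch of that three-argument variant, adapted from the AtomicLong example above and assuming reactor-core is on the classpath. To keep the output short, the table stops at 3 x 3. Instead of releasing a real resource, the cleanup argument just records the final state. The GenerateCleanupExample class and its lastState field are illustrative only.

```java
import java.util.List;
import java.util.concurrent.atomic.AtomicLong;

import reactor.core.publisher.Flux;

class GenerateCleanupExample {

    // Holds the value of the last state instance handed to the cleanup callback
    static final AtomicLong lastState = new AtomicLong(-1);

    static List<String> run() {
        Flux<String> flux = Flux.generate(
                AtomicLong::new,                      // initial (mutable) state
                (state, sink) -> {
                    long i = state.getAndIncrement();
                    sink.next("3 x " + i + " = " + 3 * i);
                    if (i == 3) sink.complete();
                    return state;                     // same instance every round
                },
                state -> lastState.set(state.get())); // cleanup of the last state
        return flux.collectList().block();
    }

    public static void main(String[] args) {
        System.out.println(run() + ", last state: " + lastState.get());
    }
}
```

The cleanup callback receives the state returned by the final round. Since getAndIncrement ran once more than the last printed row, that state holds 4.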

4.4.2. Create

The more advanced form of programmatic creation of a Flux, create, can work both asynchronously and synchronously, and is suitable for multiple emissions per round.

It exposes a FluxSink, with its next/error/complete methods. Contrary to generate, it doesn't have a state-based variant. On the other hand, it can trigger multiple events in the callback (and even from any thread at a later point in time).

create can be very useful to bridge an existing API with the reactive world, for instance an asynchronous API based on listeners.

Imagine that you use an API that is listener-based. It processes data by chunks and has two events: (1) a chunk of data is ready and (2) the processing is complete (terminal event), as represented in the MyEventListener interface:

    interface MyEventListener<T> {
        void onDataChunk(List<T> chunk);
        void processComplete();
    }

You can use create to bridge this into a Flux<T>:

    Flux<String> bridge = Flux.create(sink -> {
        myEventProcessor.register( (4)
            new MyEventListener<String>() { (1)

                public void onDataChunk(List<String> chunk) {
                    for(String s : chunk) {
                        sink.next(s); (2)
                    }
                }

                public void processComplete() {
                    sink.complete(); (3)
                }
            });
    });

| 1 | we bridge to the MyEventListener API |
| 2 | each element in a chunk becomes an element in the Flux |
| 3 | the processComplete event is translated to an onComplete |
| 4 | all of this is done asynchronously whenever the myEventProcessor executes |
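To make the bridge above runnable, the sketch below supplies a hypothetical MyEventProcessor. It simply remembers the registered listener and lets a separate producer thread fire chunks into it. The processor class and the latch-based waiting are assumptions for illustration. Only Flux.create and FluxSink come from Reactor, which is assumed to be on the classpath.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

import reactor.core.publisher.Flux;

class CreateBridgeExample {

    interface MyEventListener<T> {
        void onDataChunk(List<T> chunk);
        void processComplete();
    }

    // Hypothetical listener-based API: stores the listener, events are fired later
    static class MyEventProcessor {
        private volatile MyEventListener<String> listener;
        void register(MyEventListener<String> l) { this.listener = l; }
        void fire(List<String> chunk) { listener.onDataChunk(chunk); }
        void finish() { listener.processComplete(); }
    }

    static List<String> run() {
        MyEventProcessor processor = new MyEventProcessor();
        Flux<String> bridge = Flux.create(sink -> processor.register(
                new MyEventListener<String>() {
                    public void onDataChunk(List<String> chunk) {
                        for (String s : chunk) sink.next(s); // one onNext per element
                    }
                    public void processComplete() {
                        sink.complete();                     // terminal event
                    }
                }));

        List<String> received = new CopyOnWriteArrayList<>();
        CountDownLatch done = new CountDownLatch(1);
        // Subscribing runs the create callback, which registers the listener
        bridge.subscribe(received::add, e -> done.countDown(), done::countDown);

        // Events arrive later, from another thread
        Thread producer = new Thread(() -> {
            processor.fire(Arrays.asList("a", "b"));
            processor.fire(Arrays.asList("c"));
            processor.finish();
        });
        producer.start();
        try {
            done.await(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return received;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```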

Additionally, since create can be asynchronous and manages backpressure, you can refine how it behaves backpressure-wise by indicating an OverflowStrategy:

- IGNORE to completely ignore downstream backpressure requests. This may yield IllegalStateException when queues get full downstream.
- ERROR to signal an IllegalStateException when the downstream can't keep up.
- DROP to drop the incoming signal if the downstream is not ready to receive it.
- LATEST to let downstream only get the latest signals from upstream.
- BUFFER (the default) to buffer all signals if the downstream can't keep up. (This does unbounded buffering and may lead to OutOfMemoryError.)
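The following sketch illustrates DROP, assuming reactor-core is on the classpath. The subscriber only ever requests three items, so the remaining values pushed synchronously by the create callback are dropped rather than buffered. The completion signal needs no demand, so it still gets through. The ten-value source and the class name are illustrative.

```java
import java.util.ArrayList;
import java.util.List;

import org.reactivestreams.Subscription;
import reactor.core.publisher.BaseSubscriber;
import reactor.core.publisher.Flux;
import reactor.core.publisher.FluxSink;

class DropStrategyExample {

    static List<String> run() {
        List<String> events = new ArrayList<>();
        Flux<Integer> source = Flux.create(sink -> {
            for (int i = 0; i < 10; i++) {
                sink.next(i);  // values beyond the pending demand are dropped
            }
            sink.complete();
        }, FluxSink.OverflowStrategy.DROP);

        source.subscribe(new BaseSubscriber<Integer>() {
            @Override
            protected void hookOnSubscribe(Subscription subscription) {
                request(3);    // the only demand ever signalled
            }

            @Override
            protected void hookOnNext(Integer value) {
                events.add("next " + value);
            }

            @Override
            protected void hookOnComplete() {
                events.add("complete");
            }
        });
        return events;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```

With the default BUFFER strategy, values 3 to 9 would instead wait in the sink's queue until more demand arrives.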

Mono also has a create generator. As you should expect, the MonoSink of Mono's create doesn't allow several emissions. It will drop all signals subsequent to the first one.
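Here is a short sketch of that behavior, assuming reactor-core is on the classpath (the class name is illustrative). The second call to success is ignored, so block() returns the first value.

```java
import reactor.core.publisher.Mono;

class MonoCreateExample {

    static String run() {
        Mono<String> mono = Mono.create(sink -> {
            sink.success("first");   // the one and only emission
            sink.success("second");  // dropped: the MonoSink has already terminated
        });
        return mono.block();
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```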

Push model

A variant of create is push, which is suitable for processing events from a single producer. Similar to create, push can also be asynchronous and can manage backpressure using any of the overflow strategies supported by create. However, only one producing thread may invoke next, complete or error at a time.

    Flux<String> bridge = Flux.push(sink -> {
        myEventProcessor.register(
            new SingleThreadEventListener<String>() { (1)

                public void onDataChunk(List<String> chunk) {
                    for(String s : chunk) {
                        sink.next(s); (2)
                    }
                }

                public void processComplete() {
                    sink.complete(); (3)
                }

                public void processError(Throwable e) {
                    sink.error(e); (4)
                }
            });
    });

| 1 | we bridge to the SingleThreadEventListener API |
| 2 | events are pushed to the sink using next from a single listener thread |
| 3 | the complete event is generated from the same listener thread |
| 4 | the error event is also generated from the same listener thread |
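The sketch below runs the push bridge end to end with a hypothetical SingleThreadEventListener, and also exercises the error path. processError is translated into an onError signal, which terminates the sequence. Here the calling thread plays the role of the single producer. The listener interface and the AtomicReference used to capture it are assumptions for illustration, and reactor-core is assumed to be on the classpath.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.atomic.AtomicReference;

import reactor.core.publisher.Flux;

class PushBridgeExample {

    interface SingleThreadEventListener<T> {
        void onDataChunk(List<T> chunk);
        void processComplete();
        void processError(Throwable e);
    }

    static List<String> run() {
        AtomicReference<SingleThreadEventListener<String>> registered = new AtomicReference<>();
        Flux<String> bridge = Flux.push(sink -> registered.set(
                new SingleThreadEventListener<String>() {
                    public void onDataChunk(List<String> chunk) {
                        for (String s : chunk) sink.next(s);
                    }
                    public void processComplete() { sink.complete(); }
                    public void processError(Throwable e) { sink.error(e); }
                }));

        List<String> seen = new ArrayList<>();
        bridge.subscribe(seen::add,
                e -> seen.add("error: " + e.getMessage()),
                () -> seen.add("complete"));

        // The single producer (here: the current thread) drives the listener
        SingleThreadEventListener<String> listener = registered.get();
        listener.onDataChunk(Arrays.asList("a", "b"));
        listener.processError(new IllegalStateException("feed lost"));
        return seen;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```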

Hybrid push/pull model

Unlike push, create may be used in push or pull mode, making it suitable for bridging with listener-based APIs where data may be delivered asynchronously at any time. An onRequest callback can be registered on FluxSink to track requests. The callback may be used to request more data from the source if required, and to manage backpressure by delivering data to the sink only when requests are pending. This enables a hybrid push/pull model: downstream can pull data that is already available from upstream, and upstream can push data to downstream when data becomes available at a later time.

    Flux<String> bridge = Flux.create(sink -> {
        myMessageProcessor.register(
            new MyMessageListener<String>() {

                public void onMessage(List<String> messages) {
                    for(String s : messages) {
                        sink.next(s); (3)
                    }
                }
            });
        sink.onRequest(n -> {
            List<String> messages = myMessageProcessor.request(n); (1)
            for(String s : messages) {
                sink.next(s); (2)
            }
        });
    });

| 1 | Poll for messages when requests are made. |
| 2 | If messages are available immediately, push them to the sink. |
| 3 | Remaining messages that arrive asynchronously later are also delivered. |
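To see the pull half of that model in isolation, the sketch below replaces myMessageProcessor with a hypothetical in-memory queue of already-available messages. The subscriber signals no demand on subscription and then requests three items. The onRequest callback pulls exactly three messages, and the other two stay in the queue. The queue contents and class name are illustrative, and reactor-core is assumed to be on the classpath.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Deque;
import java.util.List;

import org.reactivestreams.Subscription;
import reactor.core.publisher.BaseSubscriber;
import reactor.core.publisher.Flux;

class HybridPullExample {

    // Hypothetical stand-in for messages the upstream already has available
    static final Deque<String> available =
            new ArrayDeque<>(Arrays.asList("m1", "m2", "m3", "m4", "m5"));

    static List<String> run() {
        Flux<String> bridge = Flux.create(sink ->
                sink.onRequest(n -> {
                    // Pull at most n messages that are already available
                    for (long i = 0; i < n && !available.isEmpty(); i++) {
                        sink.next(available.poll());
                    }
                }));

        List<String> received = new ArrayList<>();
        BaseSubscriber<String> subscriber = new BaseSubscriber<String>() {
            @Override
            protected void hookOnSubscribe(Subscription subscription) {
                // no initial demand
            }

            @Override
            protected void hookOnNext(String value) {
                received.add(value);
            }
        };
        bridge.subscribe(subscriber);
        subscriber.request(3);   // invokes the onRequest callback with n = 3
        subscriber.dispose();    // cancel: we are done
        return received;
    }

    public static void main(String[] args) {
        System.out.println(run() + ", still queued: " + available);
    }
}
```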

Cleaning up

Two callbacks, onDispose and onCancel, are provided to perform any cleanup on cancellation or termination. onDispose can be used to perform cleanup when the Flux completes, errors out or is cancelled. onCancel can be used to perform any action specific to cancellation, prior to the cleanup done with onDispose.

    Flux<String> bridge = Flux.create(sink -> {
        sink.onRequest(n -> channel.poll(n))
            .onCancel(() -> channel.cancel()) (1)
            .onDispose(() -> channel.close()); (2)
    });

| 1 | onCancel is invoked for a cancel signal |
| 2 | onDispose is invoked for a complete, error or cancel signal |
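The sketch below records when each callback fires. It assumes a source that tries to emit three values but is cut short by take(1). The cancellation coming from take triggers onCancel first and then onDispose, and the later values are never delivered. The event labels are illustrative, and reactor-core is assumed to be on the classpath.

```java
import java.util.ArrayList;
import java.util.List;

import reactor.core.publisher.Flux;

class SinkCleanupExample {

    static List<String> run() {
        List<String> events = new ArrayList<>();
        Flux<String> bridge = Flux.create(sink -> {
            sink.onCancel(() -> events.add("onCancel"))
                .onDispose(() -> events.add("onDispose"));
            sink.next("a");
            sink.next("b");   // not delivered: take(1) cancelled the sink after "a"
            sink.next("c");
            sink.complete();
        });
        bridge.take(1).subscribe(
                v -> events.add("next " + v),
                e -> events.add("error"),
                () -> events.add("complete"));
        return events;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```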

4.4.3. Handle

Present in both Mono and Flux, handle is a tiny bit different. It is an instance method, meaning that it is chained on an existing source, like common operators.

It is close to generate, in the sense that it uses a SynchronousSink and only allows one-by-one emissions. However, handle can be used to generate an arbitrary value out of each source element, possibly skipping some elements. In that sense, it can serve as a combination of map and filter.

As such, the signature of handle is handle(BiConsumer<T, SynchronousSink<R>>).

Let's take an example: the Reactive Streams specification disallows null values in a sequence. What if you want to perform a map, but you want to use a preexisting method as the map function, and said method sometimes returns null?

For instance, the following method:

    public String alphabet(int letterNumber) {
        if (letterNumber < 1 || letterNumber > 26) {
            return null;
        }
        int letterIndexAscii = 'A' + letterNumber - 1;
        return "" + (char) letterIndexAscii;
    }

It can be applied safely to a source of integers:

Using handle for a "map and eliminate nulls" scenario

    Flux<String> alphabet = Flux.just(-1, 30, 13, 9, 20)
        .handle((i, sink) -> {
            String letter = alphabet(i); (1)
            if (letter != null) (2)
                sink.next(letter); (3)
        });

    alphabet.subscribe(System.out::println);

| 1 | map to letters |
| 2 | but if the "map function" returns null… |
| 3 | …filter it out by not calling sink.next |

This prints out:

    M
    I
    T
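handle is not limited to dropping nulls. As a further sketch (the input strings and class name are made up for illustration, and reactor-core is assumed to be on the classpath), it can parse values, skip the ones that do not parse, and emit at most one converted value per source element:

```java
import java.util.List;

import reactor.core.publisher.Flux;

class HandleParseExample {

    static List<Integer> run() {
        Flux<Integer> numbers = Flux.just("1", "two", "3", "4x")
                .handle((s, sink) -> {
                    try {
                        sink.next(Integer.parseInt(s)); // "map" step: at most one next per element
                    } catch (NumberFormatException e) {
                        // "filter" step: not calling sink.next skips this element
                    }
                });
        return numbers.collectList().block();
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```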

4.5. Schedulers

Reactor, like RxJava, can be considered concurrency agnostic. It doesn't enforce a concurrency model, but rather leaves you, the developer, in command.

But that doesn't prevent the library from helping you with concurrency…

In Reactor, the execution model and where the execution happens are determined by the Scheduler that is used. A Scheduler is an interface that can abstract a wide range of implementations. The Schedulers class has static methods that give access to the following execution contexts:

- the current thread (Schedulers.immediate())
- a single, reusable thread (Schedulers.single()). Note that this method reuses the same thread for all callers, until the Scheduler is disposed. If you want a per-call dedicated thread, use Schedulers.newSingle() instead.
- an elastic thread pool (Schedulers.elastic()). It creates new worker pools as needed, and reuses idle ones unless they stay idle for too long (default is 60s), in which case the workers are disposed. This is a good choice for blocking I/O work, for instance.
- a fixed pool of workers that is tuned for parallel work (Schedulers.parallel()). It creates as many workers as you have CPU cores.
- a time-aware scheduler capable of scheduling tasks in the future, including recurring tasks (Schedulers.timer()).

Additionally, you can create a Scheduler out of any pre-existing ExecutorService [4] using Schedulers.fromExecutorService(ExecutorService), and also create new instances of the various scheduler types using newXXX methods.

| Operators are implemented using non-blocking algorithms that are tuned to facilitate the work-stealing that can happen in some Schedulers. |

Some operators use a specific Scheduler from Schedulers by default (and usually give you the option of providing a different one). For instance, calling the factory method Flux.interval(Duration.ofMillis(300)) produces a Flux<Long> that ticks every 300ms. This is enabled by Schedulers.timer() by default.

Reactor offers two means of switching the execution context (or Scheduler) in a reactive chain: publishOn and subscribeOn. Both take a Scheduler and let you switch the execution context to that scheduler. But the placement of publishOn in the chain matters, while that of subscribeOn doesn't. To understand that difference, you first have to remember that nothing happens until you subscribe().

In Reactor, when you chain operators, you wrap as many Flux/Mono specific implementations inside one another. As soon as you subscribe, a chain of Subscribers is created backward. This is effectively hidden from you: all you can see is the outer layer of Flux (or Mono) and Subscription, but these intermediate operator-specific subscribers are where the real work happens.

With that knowledge, let's have a closer look at the two operators:

- publishOn applies in the same way as any other operator, in the middle of that subscriber chain. As such, it takes signals from upstream and replays them downstream, but executes the callback on a worker from the associated Scheduler. So it affects where the subsequent operators execute (until another publishOn is chained in).
- subscribeOn rather applies to the subscription process, when that backward chain is constructed. As a consequence, no matter where you place the subscribeOn in the chain, it is always the context of the source emission that is affected. However, this doesn't affect the behavior of subsequent calls to publishOn: they still switch the execution context for the part of the chain after them. Also, only the earliest subscribeOn call in the chain is actually taken into account.
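The sketch below makes both effects visible by recording thread names, assuming reactor-core is on the classpath. The map placed before publishOn runs on the thread chosen by subscribeOn, even though subscribeOn comes last in the chain. The map placed after publishOn runs on the publishOn scheduler. The scheduler names are arbitrary. newSingle is used so that each scheduler has exactly one recognizably named thread.

```java
import java.util.List;

import reactor.core.publisher.Flux;
import reactor.core.scheduler.Scheduler;
import reactor.core.scheduler.Schedulers;

class PublishOnSubscribeOnExample {

    static List<String> run() {
        Scheduler pub = Schedulers.newSingle("pub");
        Scheduler sub = Schedulers.newSingle("sub");
        try {
            return Flux.range(1, 2)
                    .map(i -> i + " mapped on " + Thread.currentThread().getName())
                    .publishOn(pub)   // everything below runs on "pub"
                    .map(s -> s + ", then on " + Thread.currentThread().getName())
                    .subscribeOn(sub) // affects the source, wherever it is placed
                    .collectList()
                    .block();
        } finally {
            pub.dispose();
            sub.dispose();
        }
    }

    public static void main(String[] args) {
        run().forEach(System.out::println);
    }
}
```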

4.6. Handling Errors

| For a quick look at the available operators for error handling, see the relevant operator decision tree. |

In Reactive Streams, errors are terminal events. As soon as an error occurs, it stops the sequence and gets propagated down the chain of operators to the last step: the Subscriber you defined and its onError method.

Such errors should still be dealt with at the application level, for instance by displaying an error notification in a UI or sending a meaningful error payload in a REST endpoint. For that reason, the subscriber's onError method should always be defined.

If not defined, onError will throw an UnsupportedOperationException. You can further detect and triage it with the Exceptions.isErrorCallbackNotImplemented method.

But Reactor also offers alternative means of dealing with errors in the middle of the chain, as error-handling operators.

Before you learn about error-handling operators, you must keep in mind that any error in a reactive sequence is a terminal event. Even if an error-handling operator is used, it doesn't allow the original sequence to continue. Rather, it converts the onError signal into the start of a new sequence (the fallback one). As such, it replaces the terminated sequence upstream.

Let's go through each means of error handling one by one. When relevant, we'll draw a parallel with the imperative world's try patterns.

4.6.1. Error handling operators

You may be familiar with several ways of dealing with exceptions in a try/catch block. Most notably:

1. catch and return a static default value
2. catch and execute an alternative path (fallback method)
3. catch and dynamically compute a fallback value
4. catch, wrap to a BusinessException and re-throw
5. catch, log an error-specific message and re-throw
6. use the finally block to clean up resources, or a Java 7 "try-with-resources" construct

All of these have equivalents in Reactor, in the form of error-handling operators.

Before looking into these operators, let’s first try to establish a parallel between a reactive chain and a try-catch block.

When subscribing, the onError callback at the end of the chain is akin to a catch block. There, execution skips to the catch in case an Exception is thrown:

    Flux<String> s = Flux.range(1, 10)
        .map(v -> doSomethingDangerous(v)) (1)
        .map(v -> doSecondTransform(v)); (2)
    s.subscribe(value -> System.out.println("RECEIVED " + value), (3)
                error -> System.err.println("CAUGHT " + error) (4)
    );

| 1 | a transformation is performed that can throw an exception. |
| 2 | if everything went well, a second transformation is performed. |
| 3 | each successfully transformed value is printed out. |
| 4 | in case of an error, the sequence terminates and an error message is displayed. |

This is conceptually similar to the following try/catch block:

    try {
        for (int i = 1; i < 11; i++) {
            String v1 = doSomethingDangerous(i); (1)
            String v2 = doSecondTransform(v1); (2)
            System.out.println("RECEIVED " + v2);
        }
    } catch (Throwable t) {
        System.err.println("CAUGHT " + t); (3)
    }

| 1 | if an exception is thrown here… |
| 2 | …the rest of the loop is skipped… |
| 3 | …and the execution goes straight to here. |

Now that we’ve established a parallel, we’ll look at the different error handling cases and their equivalent operators.

Static fallback value

The equivalent of (1) is onErrorReturn:

    Flux.just(10)
        .map(this::doSomethingDangerous)
        .onErrorReturn("RECOVERED");

You also have the option of filtering when to recover with a default value versus letting the error propagate, depending on the exception that occurred:

    Flux.just(10)
        .map(this::doSomethingDangerous)
        .onErrorReturn(e -> e.getMessage().equals("boom10"), "recovered10");

Fallback method

If you want more than a single default value, and you have an alternative, safer way of processing your data, you can use onErrorResume. This would be the equivalent of (2).

For example, say your nominal process is fetching data from an external and unreliable service. If you also keep a local cache of the same data, which can be a bit more out of date but is more reliable, you could do the following:

    Flux.just("key1", "key2")
        .flatMap(k ->
            callExternalService(k) (1)
                .onErrorResume(e -> getFromCache(k)) (2)
        );

| 1 | for each key, we asynchronously call the external service. |
| 2 | if the external service call fails, we fall back to the cache for that key. Note that we always apply the same fallback, whatever the source error e is. |

Like onErrorReturn, onErrorResume has variants that let you filter which exceptions to fall back on, based either on the exception's class or on a Predicate. Because it takes a Function, it also lets you choose a different fallback sequence to switch to, depending on the error encountered:

    Flux.just("timeout1", "unknown", "key2")
        .flatMap(k ->
            callExternalService(k)
                .onErrorResume(error -> { (1)
                    if (error instanceof TimeoutException) (2)
                        return getFromCache(k);
                    else if (error instanceof UnknownKeyException) (3)
                        return registerNewEntry(k, "DEFAULT");
                    else
                        return Flux.error(error); (4)
                })
        );

| 1 | The function lets us dynamically choose how to continue. |
| 2 | If the source times out, let's hit the local cache. |
| 3 | If the source says the key is unknown, let's create a new entry. |
| 4 | In all other cases, "re-throw". |
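The same dispatch can also be written with the class-based onErrorResume variants mentioned above. You chain one per exception type, and any other error keeps propagating on its own. In the sketch below, callExternalService, getFromCache and registerNewEntry are hypothetical stand-ins that return Mono values. UnknownKeyException is a made-up exception type, and the key names decide which failure each call simulates. reactor-core is assumed to be on the classpath.

```java
import java.util.List;
import java.util.concurrent.TimeoutException;

import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

class ErrorResumeByTypeExample {

    static class UnknownKeyException extends RuntimeException {
        UnknownKeyException(String key) { super("unknown key " + key); }
    }

    // Hypothetical service: simulates a timeout or an unknown key based on the key name
    static Mono<String> callExternalService(String key) {
        if (key.startsWith("timeout")) return Mono.error(new TimeoutException(key));
        if (key.equals("unknown")) return Mono.error(new UnknownKeyException(key));
        return Mono.just("remote:" + key);
    }

    static Mono<String> getFromCache(String key) { return Mono.just("cache:" + key); }

    static Mono<String> registerNewEntry(String key, String value) {
        return Mono.just("new:" + key + "=" + value);
    }

    static List<String> run() {
        return Flux.just("timeout1", "unknown", "key2")
                .flatMap(k -> callExternalService(k)
                        .onErrorResume(TimeoutException.class, e -> getFromCache(k))
                        .onErrorResume(UnknownKeyException.class, e -> registerNewEntry(k, "DEFAULT")))
                .collectList()
                .block();
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```

Compared with a single function and instanceof checks, the chained form needs no explicit "re-throw" branch. An error that matches neither class simply passes through both operators.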

4.6.2. Dynamic fallback value

Even if you don't have an alternative, safer way of processing your data, you might want to compute a fallback value out of the exception you received (item (3)).

For instance, your return type might have a variant dedicated to holding an exception (think CompletableFuture.complete(T success) vs CompletableFuture.completeExceptionally(Throwable error)). In that case, you could simply instantiate the error-holding variant and pass the exception.

This can be done in the same way as the fallback method solution, using onErrorResume. You just need a tiny bit of boilerplate:

    erroringFlux.onErrorResume(error -> Mono.just( (1)
        myWrapper.fromError(error) (2)
    ));

| 1 | The boilerplate secret sauce is to use Mono.just with onErrorResume. |
| 2 | You then wrap the exception into the ad hoc class, or otherwise compute the value out of the exception… |
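Here is a sketch of that boilerplate with something concrete in place of myWrapper. The hypothetical Result type below holds either a value or an error. Each successful element is wrapped with Result.ok, and the error that terminates the source becomes one last Result.failed element. As a result, the subscriber sees a sequence that completes normally. reactor-core is assumed to be on the classpath.

```java
import java.util.List;

import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

class DynamicFallbackExample {

    // Hypothetical wrapper that can hold either a success value or an error
    static final class Result {
        final String value;
        final Throwable error;

        private Result(String value, Throwable error) {
            this.value = value;
            this.error = error;
        }

        static Result ok(String value) { return new Result(value, null); }
        static Result failed(Throwable error) { return new Result(null, error); }

        @Override
        public String toString() {
            return error == null ? "ok:" + value : "failed:" + error.getMessage();
        }
    }

    static List<Result> run() {
        Flux<String> erroringFlux = Flux.just("a", "b")
                .concatWith(Flux.error(new IllegalStateException("backend down")));
        return erroringFlux
                .map(Result::ok)
                .onErrorResume(error -> Mono.just(Result.failed(error))) // value computed from the error
                .collectList()
                .block();
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```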

Catch and rethrow

Back in the "fallback method" example, the last line inside the flatMap gives us a hint as to how item (4) (catch, wrap and re-throw) could be achieved:

    Flux.just("timeout1")
        .flatMap(k -> callExternalService(k)
            .onErrorResume(original -> Flux.error(
                new BusinessException("oops, SLA exceeded", original))
            )
        );

But actually, there is a more straightforward way of achieving the same with onErrorMap:

    Flux.just("timeout1")
        .flatMap(k -> callExternalService(k)
            .onErrorMap(original -> new BusinessException("oops, SLA exceeded", original))
        );

Log or react on the side

For cases where you want the error to continue propagating, but you still want to react to it without modifying the sequence (for instance, logging it as in item (5)), there is the doOnError operator. This operator, as well as all doOn-prefixed operators, is sometimes referred to as a "side-effect". That is because these operators let you peek inside the sequence's events without modifying them.

The example below makes use of that to ensure that, when we fall back to the cache, we at least log that the external service had a failure. We could also imagine having statistic counters to increment as an error side-effect…

    LongAdder failureStat = new LongAdder();
    Flux<String> flux =
        Flux.just("unknown")
            .flatMap(k -> callExternalService(k) (1)
                .doOnError(e -> {
                    failureStat.increment();
                    log("uh oh, falling back, service failed for key " + k); (2)
                })
                .onErrorResume(e -> getFromCache(k)) (3)
            );

| 1 | the external service call that can fail… |
| 2 | …is decorated with a logging side-effect… |
| 3 | …and then protected with the cache fallback. |
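Here is a runnable sketch of that pattern. callExternalService and getFromCache are hypothetical methods that return Mono values, and the service fails only for the key "unknown". A list stands in for the log, and reactor-core is assumed to be on the classpath. The side-effect runs once per failure, and the fallback still replaces the failed call.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.LongAdder;

import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

class SideEffectOnErrorExample {

    static final LongAdder failureStat = new LongAdder();
    static final List<String> log = new ArrayList<>(); // stand-in for a real logger

    static Mono<String> callExternalService(String key) {
        return key.equals("unknown")
                ? Mono.error(new IllegalStateException("no such key"))
                : Mono.just("remote:" + key);
    }

    static Mono<String> getFromCache(String key) { return Mono.just("cache:" + key); }

    static List<String> run() {
        return Flux.just("unknown", "key2")
                .flatMap(k -> callExternalService(k)
                        .doOnError(e -> {
                            failureStat.increment(); // side-effect: the error signal is unchanged
                            log.add("uh oh, falling back, service failed for key " + k);
                        })
                        .onErrorResume(e -> getFromCache(k)))
                .collectList()
                .block();
    }

    public static void main(String[] args) {
        System.out.println(run() + " " + log);
    }
}
```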

Using resources and the finally block

The last parallel to draw with the imperative world is the cleaning up that can be done either via a Java 7 "try-with-resources" construct or via the finally block (item (6)). Both have their Reactor equivalent, actually: using and doFinally:

    AtomicBoolean isDisposed = new AtomicBoolean();
    Disposable disposableInstance = new Disposable() {
        @Override
        public void dispose() {
            isDisposed.set(true); (4)
        }

        @Override
        public String toString() {
            return "DISPOSABLE";
        }
    };

    Flux<String> flux =
        Flux.using(
            () -> disposableInstance, (1)
            disposable -> Flux.just(disposable.toString()), (2)
            Disposable::dispose (3)
        );

| 1 | The first lambda generates the resource. Here we return our mock Disposable. |
| 2 | The second lambda processes the resource, returning a Flux<T>. |
| 3 | The third lambda is called when the Flux from (2) terminates or is cancelled, to clean up resources. |
| 4 | After subscription and execution of the sequence, the isDisposed atomic boolean would become true. |
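The cleanup lambda is not limited to the happy path. In the sketch below, the Flux built from the resource fails, and the resource is still disposed exactly once. The counter and the failing source are illustrative, and reactor-core is assumed to be on the classpath.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.atomic.AtomicReference;

import reactor.core.Disposable;
import reactor.core.publisher.Flux;

class UsingOnErrorExample {

    static final AtomicInteger disposeCount = new AtomicInteger();
    static final AtomicReference<String> seenError = new AtomicReference<>();

    static void run() {
        // Disposable has a single abstract method, so a lambda can serve as a mock resource
        Disposable resource = () -> disposeCount.incrementAndGet();

        Flux<String> flux = Flux.using(
                () -> resource,                                                    // acquire
                r -> Flux.<String>error(new IllegalStateException("read failed")), // use: fails
                Disposable::dispose);                                              // release

        flux.subscribe(v -> { }, e -> seenError.set(e.getMessage()));
    }

    public static void main(String[] args) {
        run();
        System.out.println("disposed " + disposeCount.get() + " time(s), error: " + seenError.get());
    }
}
```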

On the other hand, doFinally is about side-effects that you want to be executed whenever the sequence terminates, either with onComplete, onError or a cancel. It gives you a hint as to what kind of termination triggered the side-effect:

    LongAdder statsCancel = new LongAdder(); (1)

    Flux<String> flux =
        Flux.just("foo", "bar")
            .doFinally(type -> {
                if (type == SignalType.CANCEL) (2)
                    statsCancel.increment(); (3)
            })
            .take(1); (4)

| 1 | We assume we want to gather statistics; here we use a LongAdder. |
| 2 | doFinally consumes a SignalType for the type of termination. |
| 3 | Here we increment statistics in case of cancellation only. |
| 4 | take(1) will cancel after 1 item is emitted. |
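To compare the three kinds of termination side by side, the sketch below attaches the same doFinally callback to a completing, a cancelled and a failing sequence. It records the SignalType received each time. The recording list is illustrative, and reactor-core is assumed to be on the classpath.

```java
import java.util.ArrayList;
import java.util.List;

import reactor.core.publisher.Flux;
import reactor.core.publisher.SignalType;

class DoFinallyExample {

    static List<SignalType> run() {
        List<SignalType> seen = new ArrayList<>();

        Flux.just("foo", "bar")
            .doFinally(seen::add)        // terminates with onComplete
            .blockLast();

        Flux.just("foo", "bar")
            .doFinally(seen::add)
            .take(1)                     // cancels upstream after "foo"
            .blockLast();

        Flux.<String>error(new IllegalStateException("boom"))
            .doFinally(seen::add)        // terminates with onError...
            .onErrorReturn("recovered")  // ...which is recovered further downstream
            .blockLast();

        return seen;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```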

Demonstrating the terminal aspect of onError

In order to demonstrate that all these operators cause the upstream original sequence to terminate when the error happens, let's take a more visual example with a Flux.interval. The interval operator ticks every x units of time with an increasing Long:

    Flux<String> flux =
        Flux.interval(Duration.ofMillis(250))
            .map(input -> {
                if (input < 3) return "tick " + input;
                throw new RuntimeException("boom");
            })
            .onErrorReturn("Uh oh");

    flux.subscribe(System.out::println);
    Thread.sleep(2100); (1)

| 1 | Note that interval executes on the timer Scheduler by default. Assuming we'd want to run that example in a main class, we add a sleep here so that the application doesn't exit immediately without any value being produced. |

This prints out, one line every 250ms:

    tick 0
    tick 1
    tick 2
    Uh oh

Even with one extra second of runtime, no more ticks come in from the interval. The sequence was indeed terminated by the error.

Retrying

There is another operator of interest with regard to error handling, and you might be tempted to use it in the case above. retry, as its name indicates, lets you retry an erroring sequence.

The caveat is that it works by re-subscribing to the upstream Flux. So this is still, in effect, a different sequence, and the original one is still terminated. To verify that, we can reuse the previous example and append a retry(1) to retry once, instead of the onErrorReturn:

    Flux.interval(Duration.ofMillis(250))
        .map(input -> {
            if (input < 3) return "tick " + input;
            throw new RuntimeException("boom");
        })
        .elapsed() (1)
        .retry(1)
        .subscribe(System.out::println,
                   System.err::println); (2)

    Thread.sleep(2100); (3)

| 1 | elapsed associates each value with the duration since the previous value was emitted. |
| 2 | We also want to see when there is an onError. |
| 3 | We have enough time for our 4x2 ticks. |

This prints out:

    259,tick 0
    249,tick 1
    251,tick 2
    506,tick 0 (1)
    248,tick 1
    253,tick 2
    java.lang.RuntimeException: boom

| 1 | Here a new interval started, from tick 0. The additional 250ms duration comes from the 4th tick, the one that causes the exception and subsequent retry. |

As you can see above, retry(1) merely re-subscribed to the original interval once, restarting the tick from 0. The second time around, since the exception still occurs, it gives up and propagates it downstream.
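You can make the same experiment deterministic, and independent of timing, by replacing interval with a synchronous source and counting subscriptions. In this sketch, Flux.defer makes each subscription, including the one performed by retry, rebuild the sequence from scratch. The subscription counter is an illustrative addition, and reactor-core is assumed to be on the classpath.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

import reactor.core.publisher.Flux;

class RetryResubscribeExample {

    static final AtomicInteger subscriptions = new AtomicInteger();

    static List<String> run() {
        Flux<String> ticks = Flux.defer(() -> {
            subscriptions.incrementAndGet();   // one increment per (re-)subscription
            return Flux.range(0, 4).map(input -> {
                if (input < 3) return "tick " + input;
                throw new RuntimeException("boom");
            });
        });

        List<String> seen = new ArrayList<>();
        ticks.retry(1)
             .subscribe(seen::add, e -> seen.add("error: " + e.getMessage()));
        return seen;
    }

    public static void main(String[] args) {
        System.out.println(run() + ", subscriptions: " + subscriptions.get());
    }
}
```

The output shows the same shape as the interval version. The ticks restart from 0 after the single retry, and then the second error reaches the subscriber.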

There is a more advanced version of retry that uses a "companion" flux to tell whether or not a particular failure should retry: retryWhen. This companion flux is created by the operator but decorated by the user, in order to customize the retry condition.

The companion flux is a Flux<Throwable> that gets passed to a Function, the sole parameter of retryWhen. As the user, you define that function and make it return a new Publisher<?>. Retry cycles go like this:

- Each time an error happens (potential for a retry), the error is emitted into the companion flux. That flux has been originally decorated by your function.
- If the companion flux emits something, a retry happens.
- If the companion flux completes, the retry cycle stops and the original sequence completes too.
- If the companion flux errors, the retry cycle stops and the original sequence stops too: the error causes the original sequence to fail and terminate.

The distinction between the last two cases is important. Simply completing the companion would effectively swallow an error. Consider the following attempt at emulating retry(3) using retryWhen:

    Flux<String> flux =
        Flux.<String>error(new IllegalArgumentException()) (1)
            .doOnError(System.out::println) (2)
            .retryWhen(companion -> companion.take(3)); (3)

| 1 | This continuously errors, calling for retry attempts. |
| 2 | doOnError before the retry lets us see all failures. |
| 3 | Here we just consider the first 3 errors as retry-able (take(3)), then give up. |

In effect, this results in an empty flux, but one that completes successfully. Since retry(3) on the same flux would have terminated with the latest error, this is not entirely the same…

Getting to the same behavior involves a few additional tricks:

    Flux<String> flux =
        Flux.<String>error(new IllegalArgumentException())
            .retryWhen(companion -> companion
                .zipWith(Flux.range(1, 4), (1)
                    (error, index) -> { (2)
                        if (index < 4) return index; (3)
                        else throw Exceptions.propagate(error); (4)
                    })
            );

| 1 | Trick one: use zip and a range of "number of acceptable retries + 1"… |
| 2 | The zip function lets us count the retries while keeping track of the original error. |
| 3 | To allow for 3 retries, indexes before 4 return a value to emit… |
| 4 | …but in order to terminate the sequence in error, we throw the original exception after these 3 retries. |

| A similar code can be used to implement an exponential backoff and retry pattern, as shown in the FAQ. |

4.6.3. How are exceptions in operators or functions handled?

In general, all operators can themselves contain code that potentially triggers an exception, or call a user-defined callback that can similarly fail, so they all contain some form of error handling.

As a rule of thumb, an unchecked exception is always propagated through onError. For instance, throwing a RuntimeException inside a map function translates to an onError event:

    Flux.just("foo")
        .map(s -> { throw new IllegalArgumentException(s); })
        .subscribe(v -> System.out.println("GOT VALUE"),
                   e -> System.out.println("ERROR: " + e));

This would print out:

    ERROR: java.lang.IllegalArgumentException: foo

Reactor, however, defines a set of exceptions that are always deemed fatal [5], meaning that Reactor cannot keep operating. These are thrown rather than propagated.

Internally, there are also cases where an unchecked exception still cannot be propagated, most notably during the subscribe and request phases, due to concurrency races that could lead to a double onError/onComplete. When these races happen, the error that cannot be propagated is "dropped". These cases can still be managed to some extent, as the error goes through the Hooks.onErrorDropped customizable hook.

You may wonder, what about checked exceptions?

If, say, you need to call some method that declares it throws exceptions, you still have to deal with said exceptions in a try/catch block. You have several options, though:

- catch the exception and recover from it; the sequence continues normally.
- catch the exception and wrap it into an unchecked one, then throw it (interrupting the sequence). The Exceptions utility class can help you with that (see below).
- if you're expected to return a Flux (e.g. you're in a flatMap), just wrap the exception into an erroring flux: return Flux.error(checkedException). (The sequence also terminates.)
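Here is a sketch of the third option, using a method that declares a checked IOException (the readRecord method and its failure condition are hypothetical, and reactor-core is assumed to be on the classpath). Inside flatMap, the checked exception is caught and returned as Flux.error. This terminates the sequence with the IOException itself, without any wrapping.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

import reactor.core.publisher.Flux;

class CheckedInFlatMapExample {

    // Hypothetical method declaring a checked exception
    static String readRecord(int id) throws IOException {
        if (id > 2) throw new IOException("cannot read record " + id);
        return "record " + id;
    }

    static List<String> run() {
        List<String> seen = new ArrayList<>();
        Flux.range(1, 5)
            .flatMap(id -> {
                try {
                    return Flux.just(readRecord(id));
                } catch (IOException e) {
                    return Flux.<String>error(e); // terminates the sequence with e
                }
            })
            .subscribe(seen::add,
                       e -> seen.add(e.getClass().getSimpleName() + ": " + e.getMessage()));
        return seen;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```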

Reactor has an Exceptions utility class that you can use, notably to ensure that exceptions are wrapped only if they are checked exceptions:

- use the Exceptions.propagate method to wrap exceptions if necessary. It also calls throwIfFatal first, and won't wrap RuntimeException.
- use the Exceptions.unwrap method to get the original unwrapped exception (going back to the root cause of a hierarchy of reactor-specific exceptions).

Let's take the example of a map that uses a conversion method that can throw an IOException:

    public String convert(int i) throws IOException {
        if (i > 3) {
            throw new IOException("boom " + i);
        }
        return "OK " + i;
    }

Now imagine you want to use that method in a map. You now have to explicitly catch the exception, and your map function cannot re-throw it. So you can propagate it to map's onError as a RuntimeException:

    Flux<String> converted = Flux
        .range(1, 10)
        .map(i -> {
            try { return convert(i); }
            catch (IOException e) { throw Exceptions.propagate(e); }
        });

Later on, when subscribing to the above flux and reacting to errors (e.g. in the UI), you could revert back to the original exception in case you want to do something special for IOExceptions:

    converted.subscribe(
        v -> System.out.println("RECEIVED: " + v),
        e -> {
            if (Exceptions.unwrap(e) instanceof IOException) {
                System.out.println("Something bad happened with I/O");
            } else {
                System.out.println("Something bad happened");
            }
        }
    );

4.7. Processor

Processors are a special kind of Publisher that are also a Subscriber. That

means that you can subscribe to a Processor (generally, they implement

Flux), but also call methods to manually inject data into the sequence or

terminate it…

There are several kind of Processors, each with a few particular semantics, but before you start looking into these, you need to ask yourself the following question:

4.7.1. Do I need a Processor?

Most of the time, you should try to avoid using a Processor. They are harder to use correctly and prone to some corner cases.

So if you think a Processor could be a good match for your use case, ask yourself whether you have tried these two alternatives first:

- could a classic operator or combination of operators fit the bill? (see Which operator do I need?)
- could a generator operator work instead? (generally, these operators are made to bridge APIs that are not reactive, providing a "sink" that is very similar in concept to a Processor, in the sense that it allows you to populate the sequence with data or terminate it)

If, after exploring the above alternatives, you still think you need a Processor, head to the Choosing the right Processor appendix to learn about the different implementations.
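To make the "generator operator" alternative above more concrete, here is a minimal sketch, assuming reactor-core 3.x is on the classpath, that bridges a callback-based API with Flux.create instead of feeding a Processor by hand. EventListener and LegacyEventSource are hypothetical stand-ins for a non-reactive API; they are not Reactor types.

```java
import java.util.Arrays;
import java.util.List;
import reactor.core.publisher.Flux;

class CreateInsteadOfProcessor {

    // Hypothetical callback interface of a non-reactive API (not part of Reactor).
    interface EventListener {
        void onEvent(String event);
        void onDone();
    }

    // Hypothetical non-reactive source that pushes its events to a listener.
    static final class LegacyEventSource {
        private final List<String> events;

        LegacyEventSource(List<String> events) {
            this.events = events;
        }

        void start(EventListener listener) {
            events.forEach(listener::onEvent);
            listener.onDone();
        }
    }

    // Flux.create provides a FluxSink that the callbacks feed, much like
    // one would otherwise feed a Processor manually.
    static Flux<String> bridge(LegacyEventSource source) {
        return Flux.create(sink -> source.start(new EventListener() {
            @Override
            public void onEvent(String event) {
                sink.next(event);
            }

            @Override
            public void onDone() {
                sink.complete();
            }
        }));
    }

    public static void main(String[] args) {
        LegacyEventSource source = new LegacyEventSource(Arrays.asList("a", "b", "c"));
        System.out.println(bridge(source).collectList().block()); // [a, b, c]
    }
}
```

In this sketch each subscription calls start() again, so the resulting Flux is cold; that is a choice made here, not something Flux.create imposes.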

4.7.2. Producing from multiple threads

FluxProcessor sinks safely gate multi-threaded producers and can be used by applications that generate data from multiple threads concurrently. For example, a thread-safe serialized sink can be created for UnicastProcessor:

UnicastProcessor<Integer> processor = UnicastProcessor.create();
FluxSink<Integer> sink = processor.sink(overflowStrategy);

Multiple producer threads may concurrently generate data on this serialized sink:

sink.next(n);

Overflow from next behaves in one of two ways, depending on the Processor:

- an unbounded processor handles the overflow itself, by dropping or buffering
- a bounded processor blocks/spins on the IGNORE strategy, or applies the overflowStrategy behavior specified for the sink
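The UnicastProcessor snippet above leaves overflowStrategy unspecified. As a self-contained sketch of the same multi-producer idea, assuming reactor-core 3.x on the classpath, the following swaps in Flux.create, whose FluxSink also serializes next calls coming from several threads. The class name, the thread count and the number of values per thread are illustrative assumptions, and BUFFER is just one of the FluxSink.OverflowStrategy values.

```java
import java.util.ArrayList;
import java.util.List;
import reactor.core.publisher.Flux;
import reactor.core.publisher.FluxSink;

class MultiThreadedSinkDemo {

    // Each of `threads` producer threads emits `perThread` values into one shared sink.
    static long countEmitted(int threads, int perThread) {
        Flux<Integer> flux = Flux.create(sink -> {
            List<Thread> producers = new ArrayList<>();
            for (int t = 0; t < threads; t++) {
                Thread producer = new Thread(() -> {
                    for (int i = 0; i < perThread; i++) {
                        sink.next(i); // concurrent calls are serialized by the sink
                    }
                });
                producers.add(producer);
                producer.start();
            }
            for (Thread producer : producers) {
                try {
                    producer.join();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    sink.error(e);
                    return;
                }
            }
            sink.complete(); // only once every producer is done
        }, FluxSink.OverflowStrategy.BUFFER);

        Long count = flux.count().block();
        return count == null ? 0 : count;
    }

    public static void main(String[] args) {
        System.out.println(countEmitted(4, 1_000)); // 4000
    }
}
```

No value is lost even though several threads call next at the same time, which is what the serialized sink is meant to guarantee.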

5. Which operator do I need?

In this section, if an operator is specific to Flux or Mono, it is prefixed accordingly; common operators have no prefix. When a specific use case is covered by a combination of operators, it is presented as a method call, with a leading dot and parameters in parentheses, like .methodCall().

| I want to deal with: Creating a new sequence…, An existing sequence, Peeking into a sequence, Filtering a sequence, Errors, Time, Splitting a Flux or Going back to the Synchronous world. |

5.1. Creating a new sequence…

- that emits a T I already have: just
  - …from an Optional<T>: Mono#justOrEmpty(Optional<T>)
  - …from a potentially null T: Mono#justOrEmpty(T)
- that emits a T returned by a method: just as well
  - …but lazily captured: use Mono#fromSupplier or wrap just inside defer
- that emits several T I can explicitly enumerate: Flux#just(T...)
- that iterates over…
  - an array: Flux#fromArray
  - a collection / iterable: Flux#fromIterable
  - a range of integers: Flux#range
- that emits from various single-valued sources like…
  - a Supplier<T>: Mono#fromSupplier
  - a task: Mono#fromCallable, Mono#fromRunnable
  - a CompletableFuture<T>: Mono#fromFuture
- that completes: empty
- that errors immediately: error
- that never does anything: never
- that is decided at subscription: defer
- that depends on a disposable resource: using
- that generates events programmatically (can use state)…
  - synchronously and one-by-one: Flux#generate
  - asynchronously (can also be sync), multiple emissions possible in one pass: Flux#create (Mono#create as well, without the multiple emission aspect)
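A few of the factories above, in a small sketch assuming reactor-core 3.x on the classpath. lookup() is a hypothetical stand-in for "a method that returns a T"; counting its invocations shows the difference between just (whose argument is evaluated when the Mono is assembled) and fromSupplier or defer (evaluated at each subscription). The class and method names are illustrative.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Optional;
import java.util.concurrent.atomic.AtomicInteger;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

class CreatingSequences {

    // Hypothetical "method that returns a T"; counts how many times it actually runs.
    static final AtomicInteger lookups = new AtomicInteger();

    static String lookup() {
        return "value-" + lookups.incrementAndGet();
    }

    // Mono#justOrEmpty: an empty Optional (or a null T) gives an empty Mono.
    static boolean emptyOptionalGivesEmptyMono() {
        Boolean hasValue = Mono.justOrEmpty(Optional.<String>empty()).hasElement().block();
        return Boolean.FALSE.equals(hasValue);
    }

    // just captures its value at assembly time; fromSupplier and defer wait for a subscription.
    static int[] lookupCounts() {
        lookups.set(0);
        Mono<String> eager = Mono.just(lookup());                         // lookup() runs here
        Mono<String> lazy = Mono.fromSupplier(CreatingSequences::lookup); // runs per subscription
        Mono<String> deferred = Mono.defer(() -> Mono.just(lookup()));    // same, via defer
        int beforeSubscribing = lookups.get();                            // 1
        eager.block();    // replays the captured value, no new lookup
        lazy.block();     // second lookup
        deferred.block(); // third lookup
        return new int[] {beforeSubscribing, lookups.get()};
    }

    // Flux#generate: synchronous, one-by-one emission driven by a state (here a counter).
    static List<Integer> squares(int count) {
        return Flux.<Integer, Integer>generate(
                        () -> 1,                      // initial state
                        (state, sink) -> {
                            sink.next(state * state); // exactly one next per round
                            if (state == count) {
                                sink.complete();
                            }
                            return state + 1;         // state for the next round
                        })
                .collectList()
                .block();
    }

    public static void main(String[] args) {
        System.out.println(emptyOptionalGivesEmptyMono());        // true
        System.out.println(Arrays.toString(lookupCounts()));      // [1, 3]
        System.out.println(squares(4));                           // [1, 4, 9, 16]
    }
}
```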

5.2. An existing sequence

- I want to transform existing data…
  - on a 1-to-1 basis (e.g., strings to their length): map
    - …by just casting it: cast
  - on a 1-to-n basis (e.g., strings to their characters): flatMap + use a factory method
  - on a 1-to-n basis with programmatic behavior for each source element and/or state: handle
  - running an asynchronous task for each source item (e.g., URLs to HTTP requests): flatMap + an async Publisher-returning method
    - …ignoring some data: conditionally return a Mono.empty() in the flatMap lambda
    - …retaining the original sequence order: Flux#flatMapSequential (this triggers the async processes immediately but reorders the results)
    - …where the async task can return multiple values, from a Mono source: Mono#flatMapMany

- I want to aggregate a Flux… (the Flux# prefix is assumed below)
  - into a List: collectList, collectSortedList
  - into a Map: collectMap, collectMultiMap
  - into an arbitrary container: collect
  - into the size of the sequence: count
  - by applying a function between each element (e.g., running sum): reduce
    - …but emitting each intermediary value: scan
  - into a boolean value from a predicate…
    - applied to all values (AND): all
    - applied to at least one value (OR): any
    - testing the presence of any value: hasElements
    - testing the presence of a specific value: hasElement

- I want to combine publishers…
  - in sequential order: Flux#concat / .concatWith(other)
    - …but delaying any error until remaining publishers have been emitted: Flux#concatDelayError
    - …but eagerly subscribing to subsequent publishers: Flux#mergeSequential
  - in emission order (combined items emitted as they come): Flux#merge / .mergeWith(other)
    - …with different types (transforming merge): Flux#zip / Flux#zipWith
  - by pairing values…
    - from 2 Monos into a Tuple2: Mono#and
    - from n Monos when they all completed: Mono#when
    - into an arbitrary container type…
      - each time all sides have emitted: Flux#zip (up to the smallest cardinality)
      - each time a new value arrives at either side: Flux#combineLatest
  - only considering the sequence that emits first: Flux#firstEmitting, Mono#first, mono.or(otherMono).or(thirdMono)
  - triggered by the elements in a source sequence: switchMap (each source element is mapped to a Publisher)
  - triggered by the start of the next publisher in a sequence of publishers: switchOnNext

- I want to repeat an existing sequence: repeat
  - …but at time intervals: Flux.interval(duration).flatMap(tick -> myExistingPublisher)
- I have an empty sequence but…
  - I want a value instead: defaultIfEmpty
  - I want another sequence instead: switchIfEmpty
- I have a sequence but I’m not interested in values: ignoreElements
  - …and I want the completion represented as a Mono: then
  - …and I want to wait for another task to complete at the end: thenEmpty
  - …and I want to switch to another Mono at the end: Mono#then(mono)
  - …and I want to switch to a Flux at the end: thenMany
- I have a Mono for which I want to defer completion…
  - …only when 1-N other publishers have all emitted (or completed): Mono#delayUntilOther
    - …and deriving these publishers from the Mono value: Mono#delayUntil(Function)
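The following sketch, assuming reactor-core 3.x on the classpath, picks one or two operators from several groups of the list above: map and flatMap for transformation, reduce and scan for aggregation, concat and zip for combination, then defaultIfEmpty and thenMany for empty sequences and ignored values. The class and method names are made up for illustration.

```java
import java.util.Arrays;
import java.util.List;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

class ExistingSequences {

    // 1-to-1 transformation: map
    static List<Integer> lengths(List<String> words) {
        return Flux.fromIterable(words).map(String::length).collectList().block();
    }

    // 1-to-n transformation: flatMap + a factory method (Flux.fromArray)
    static List<String> characters(String word) {
        return Flux.just(word)
                .flatMap(w -> Flux.fromArray(w.split("")))
                .collectList()
                .block();
    }

    // Aggregation: reduce emits only the final result...
    static Integer sum(int n) {
        return Flux.range(1, n).reduce(0, Integer::sum).block();
    }

    // ...while scan emits each intermediate accumulation
    static List<Integer> runningSums(int n) {
        return Flux.range(1, n).scan(Integer::sum).collectList().block();
    }

    // Combination in sequential order: concat
    static List<Integer> concatenated() {
        return Flux.concat(Flux.just(1, 2), Flux.just(3)).collectList().block();
    }

    // Pairing values: zip stops at the smaller cardinality (here 2)
    static List<String> zipped() {
        return Flux.zip(Flux.just("a", "b", "c"), Flux.range(1, 2), (letter, n) -> letter + n)
                .collectList()
                .block();
    }

    // Empty sequence: defaultIfEmpty substitutes a value
    static String orFallback() {
        return Mono.<String>empty().defaultIfEmpty("fallback").block();
    }

    // Ignoring values: thenMany switches to another Flux once the source completes
    static List<String> afterCompletion() {
        return Flux.just(1, 2, 3).thenMany(Flux.just("done")).collectList().block();
    }

    public static void main(String[] args) {
        System.out.println(lengths(Arrays.asList("a", "bb", "ccc"))); // [1, 2, 3]
        System.out.println(characters("abc"));                        // [a, b, c]
        System.out.println(sum(4) + " " + runningSums(4));            // 10 [1, 3, 6, 10]
        System.out.println(concatenated() + " " + zipped());          // [1, 2, 3] [a1, b2]
        System.out.println(orFallback() + " " + afterCompletion());   // fallback [done]
    }
}
```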

5.3. Peeking into a sequence

- Without modifying the final sequence, I want to…
  - get notified of / execute additional behavior [6] on…
    - emissions: doOnNext
    - completion: Flux#doOnComplete, Mono#doOnSuccess (includes the result if any)
    - error termination: doOnError
    - cancellation: doOnCancel
    - subscription: doOnSubscribe
    - request: doOnRequest
    - completion or error: doOnTerminate (Mono version includes the result if any)
      - but after it has been propagated downstream: doAfterTerminate
    - any type of signal, represented as a Signal: Flux#doOnEach
    - any terminating condition (complete, error, cancel): doFinally
  - log what happens internally: log
- I want to know of all events…
  - each represented as a Signal object…
    - in a callback outside the sequence: doOnEach
    - instead of the original onNext emissions: materialize
      - …and get back to the onNexts: dematerialize
  - as a line in a log: log
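A small sketch of the peeking operators, assuming reactor-core 3.x on the classpath: the doOn* callbacks only record what happens, while the subscriber still receives 1, 2, 3 unchanged. materialize exposes the completion as a Signal of its own, and dematerialize turns the Signal objects back into regular signals. The class and method names are illustrative.

```java
import java.util.ArrayList;
import java.util.List;
import reactor.core.publisher.Flux;

class PeekingDemo {

    // Side effects are recorded in `log`; the values themselves are untouched.
    static List<String> sideEffects() {
        List<String> log = new ArrayList<>();
        List<Integer> received = Flux.range(1, 3)
                .doOnSubscribe(subscription -> log.add("subscribed"))
                .doOnNext(i -> log.add("next " + i))
                .doOnComplete(() -> log.add("complete"))
                .doFinally(signalType -> log.add("finally " + signalType.name()))
                .collectList()
                .block();
        log.add("received " + received);
        return log;
    }

    // materialize turns each signal, including completion, into a Signal object;
    // dematerialize restores the original onNext/onComplete signals.
    static List<String> signalTypes() {
        List<String> types = new ArrayList<>();
        Flux.just("a", "b")
                .materialize()
                .doOnNext(signal -> types.add(signal.getType().name()))
                .dematerialize()
                .blockLast();
        return types;
    }

    public static void main(String[] args) {
        System.out.println(sideEffects());
        System.out.println(signalTypes()); // [ON_NEXT, ON_NEXT, ON_COMPLETE]
    }
}
```

Since everything here runs synchronously on the calling thread, the doFinally callback has already run by the time block() returns.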

5.4. Filtering a sequence

- I want to filter a sequence…
  - based on an arbitrary criterion: filter
    - …that is asynchronously computed: filterWhen
  - restricting on the type of the emitted objects: ofType
  - by ignoring the values altogether: ignoreElements
  - by ignoring duplicates…
    - in the whole sequence (logical set): Flux#distinct
    - between subsequently emitted items (deduplication): Flux#distinctUntilChanged

- I want to keep only a subset of the sequence…
  - by taking elements…
    - at the beginning of the sequence: Flux#take(int)
      - …based on a duration: Flux#take(Duration)
      - …only the first element, as a Mono: Flux#next()
    - at the end of the sequence: Flux#takeLast
    - until a criterion is met (inclusive): Flux#takeUntil (predicate-based), Flux#takeUntilOther (companion publisher-based)
    - while a criterion is met (exclusive): Flux#takeWhile
  - by taking at most 1 element…
    - at a specific position: Flux#elementAt
    - at the end: .takeLast(1)
      - …and emit an error if empty: Flux#last()
      - …and emit a default value if empty: Flux#last(T)
  - by skipping elements…
    - at the beginning of the sequence: Flux#skip(int)
      - …based on a duration: Flux#skip(Duration)
    - at the end of the sequence: Flux#skipLast
    - until a criterion is met (inclusive): Flux#skipUntil (predicate-based), Flux#skipUntilOther (companion publisher-based)
    - while a criterion is met (exclusive): Flux#skipWhile
  - by sampling items…
    - by duration: Flux#sample(Duration)
      - but keeping the first element in the sampling window instead of the last: sampleFirst
    - by a publisher-based window: Flux#sample(Publisher)
    - based on a publisher "timing out":